Every machine learning seismologist who has ever attempted to simply download a high-performing deep neural network trained natively on California tectonic earthquakes, copy the weights, and apply it directly to Oklahoma induced seismicity has immediately encountered the exact same uncomfortable result: model performance degrades, and sometimes it degrades dramatically.

The algorithms don't break, but the physics of the data has fundamentally changed. The underlying waveform statistics are subtly different. The magnitude-frequency distributions are radically different. The focal mechanisms, the source depths, and the local attenuation structures are entirely distinct.

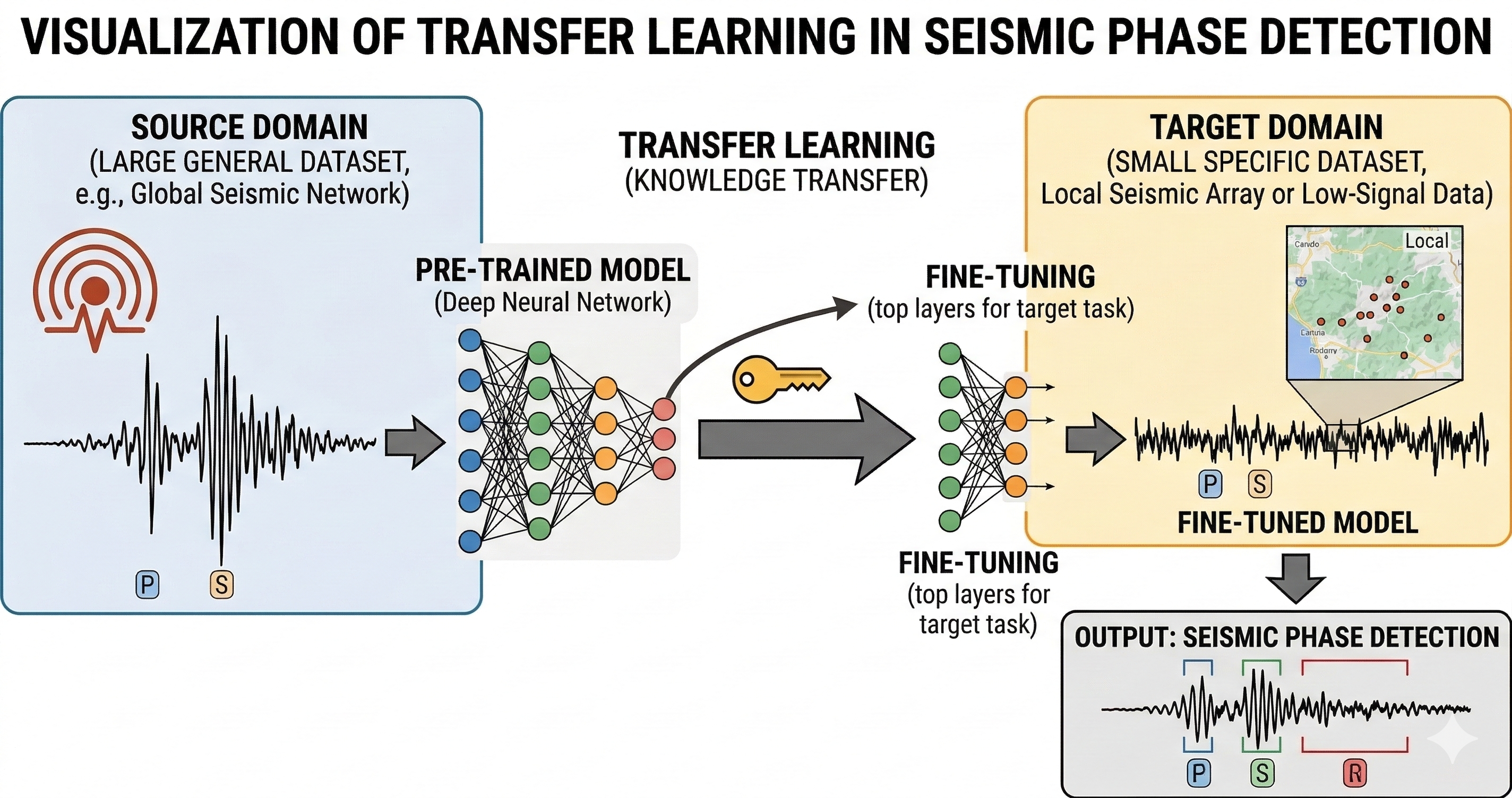

This phenomenon is known as domain shift — the harsh statistical gap between the source distribution a model was originally trained on and the target distribution it is being asked to generalize to in production. Transfer learning does not magically eliminate domain shift, but it provides the principled, mathematically sound tools required for adapting AI across it.

The Physics of Seismic Domain Shift

To understand why models fail across regions, we must look at the wave propagation physics. A seismic signal recorded at a station is a convolution of three things: the source mechanism (the slip on the fault), the path effect (geometric spreading and attenuation through the crust), and the site effect (the highly localized shallow geology directly beneath the sensor).

A model trained on the massive Southern California Seismic Network (SCSN) catalog implicitly learns the path and site effects of California. When applied to Oklahoma—where earthquakes are primarily shallow events induced by wastewater injection, traveling through vastly different mid-continent sedimentary geology—the neural network encounters feature distributions it has entirely never seen before. High-frequency attenuation is different. Coda wave decay is different.

What Actually Transfers in a Neural Network?

In deep convolutional neural networks (CNNs) designed for seismic phase picking, such as PhaseNet or EQTransformer, learning is hierarchical. The lower computational layers (those closest to the raw input waveform) learn highly generic, fundamental waveform features: basic onset sharpness, raw frequency content, simple phase shifts, and generalized amplitude envelope shapes.

These foundational features tend to transfer brilliantly across seismic domains because the fundamental physics of compressional P-waves and shear S-waves share overarching physical characteristics regardless of the localized tectonic setting. A sharp onset is a sharp onset.

The higher network layers, however, encode much more complex, domain-specific decision boundaries, heavily biased by the training region's specific noise profiles and path effects. These layers almost always need aggressive retraining — or at minimum, fine-tuning — when deploying a model to a new seismic region.

Oklahoma as a Target Domain

Oklahoma's induced seismicity presents severe and unique challenges to pre-trained models. Because the events are driven primarily by industrial wastewater injection from oil and gas operations, the events possess unique signatures. They tend to cluster intensely in both space and time (often presenting as overlapping swarm events). Their magnitude ranges skew significantly lower, generating microseismicity that barely pierces the noise floor, and the regional velocity model differs substantially from the West Coast.

Consequently, massive foundation models pretrained on global or California-centric datasets like STEAD (STanford EArthquake Dataset) or the SCSN catalog require careful, structured adaptation before they can realistically perform at the level of a human analyst on local data from the Oklahoma Geological Survey (OGS) network.

In my work evaluating transfer learning and knowledge-distilled models for edge deployment, I consistently found that taking a STEAD-trained model and fine-tuning it on a regionally curated Oklahoma subset — even a relatively tiny dataset of a few thousand labeled events — closed roughly 80% of the target performance gap. The remaining gap typically resided entirely in very low-magnitude detection, where the Signal-to-Noise Ratio (SNR) is so inherently low that the domain shift between regional noise profiles becomes the dominant factor.

Data Efficiency and Few-Shot Adaptation

One of transfer learning's greatest practical, engineering advantages stands outside of pure accuracy: it is incredibly data-efficient. Fully supervised training of a robust, generalized phase picker entirely from scratch typically requires hundreds of thousands of meticulously labeled examples. Gathering this much data requires thousands of hours of expensive analyst time.

Transfer learning via targeted fine-tuning can achieve comparable, and often strictly superior, performance using only a few thousand newly labeled examples from the target domain.

For local or under-funded regional seismic networks where historical labeled catalogs are incomplete, spotty, or inconsistently annotated, transfer learning is not merely a convenient optimization trick — it is often the only technically and financially viable path to deploying deep learning in production.

Be the first to respond.