For the vast majority of the deep learning era, true artificial intelligence lived almost exclusively in the cloud. Massive, multi-billion-parameter models ran on liquid-cooled server racks filled with thousand-dollar GPUs, consuming kilowatts of power and requiring relentless, high-bandwidth internet connections. TinyML inverts this paradigm entirely — it brings trained neural networks out of the data center and down to silicon chips that run on mere milliwatts.

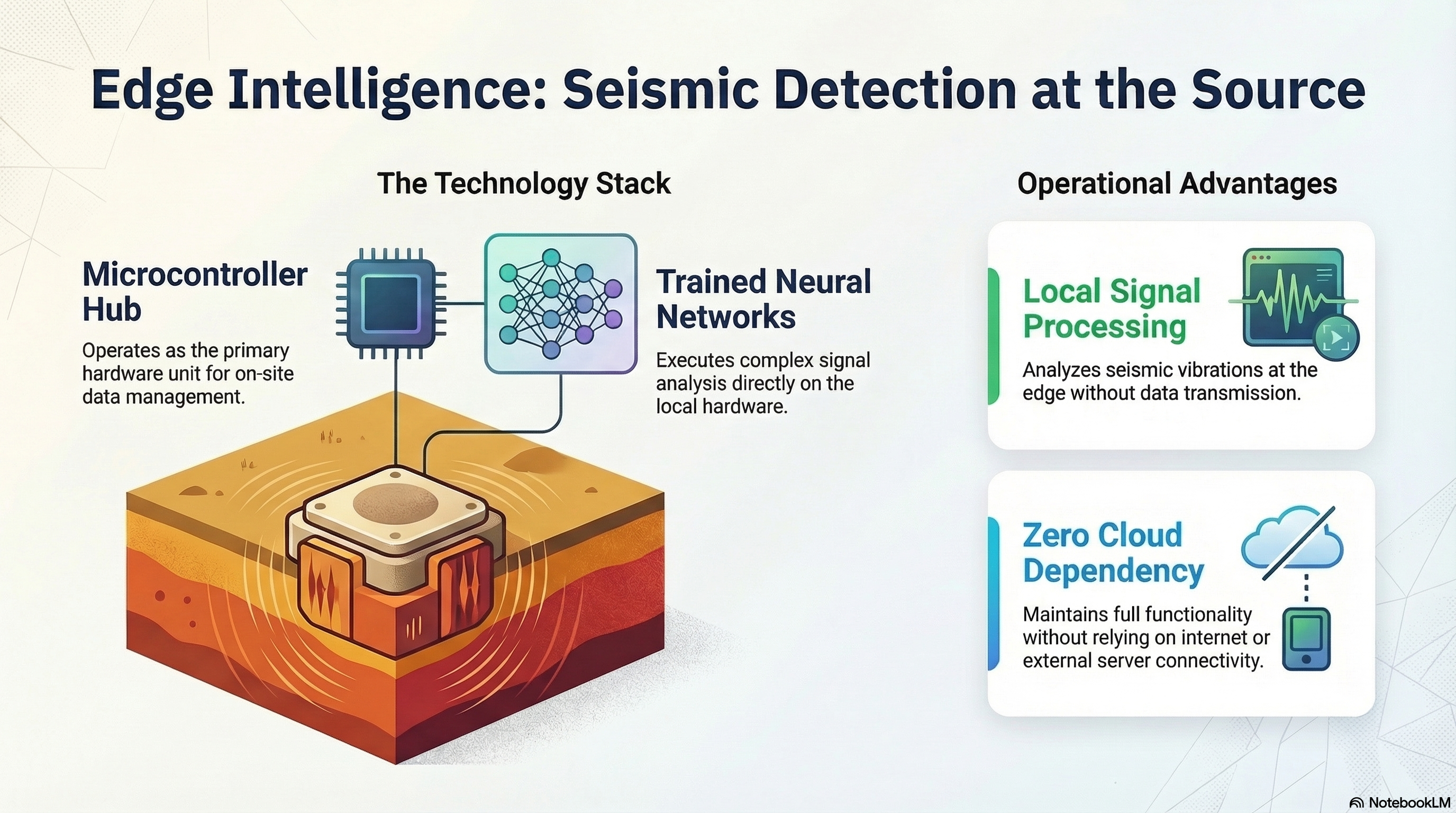

This shift is not just a parlor trick; it is an infrastructural necessity, because the physical world generates data at the sensor edge, not in server farms. A battery-powered seismometer staked in a remote Oklahoma field gains nothing from a 200 ms round trip to a cloud inference API. It needs categorical answers ("Is this an earthquake or a truck?") in single-digit milliseconds, on-site, even when cellular connectivity drops out completely.

What Makes TinyML Different: The Tyranny of Constraints

TinyML is not simply "smaller ML." It requires radically rethinking the entire model lifecycle — from the initial architectural design and dataset curation down to memory-constrained C++ deployment and rigorous, power-aware CPU scheduling.

When developing for the cloud, adding an extra dense layer to squeeze out 1% more accuracy is trivial. When developing for a microcontroller like an ESP32 or a Cortex-M4, that extra layer might not fit in the chip's memory at all. An edge device must typically fit its entire operating system, networking stack, data buffers, and neural network within a few megabytes: on ESP32-class modules, roughly 2, 4, or 8 MB of PSRAM and perhaps 4, 8, or 16 MB of flash. Every single byte is fiercely contested.
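
The budgeting pressure can be made concrete with a few lines of arithmetic. The sketch below is only illustrative: the parameter count, the 4 MiB flash size, and the 3 MiB assumed to be taken by firmware are hypothetical figures, and the calculation covers weight storage only, ignoring file-format overhead and activation buffers.

```python
# Rough flash-budget check for a model's weights.
# All sizes below are illustrative assumptions, not measurements.

MIB = 1024 * 1024


def weight_bytes(num_params: int, bits_per_weight: int) -> int:
    """Bytes needed to store the weights alone (rounded up to whole bytes)."""
    return (num_params * bits_per_weight + 7) // 8


def fits(num_params: int, bits_per_weight: int, budget_bytes: int) -> bool:
    """True if the weights fit inside the given byte budget."""
    return weight_bytes(num_params, bits_per_weight) <= budget_bytes


# Hypothetical split: 4 MiB of flash, 3 MiB already used by firmware and networking.
model_budget = 4 * MIB - 3 * MIB

params = 500_000  # hypothetical model size

print("FP32:", weight_bytes(params, 32), "bytes, fits:", fits(params, 32, model_budget))
print("INT8:", weight_bytes(params, 8), "bytes, fits:", fits(params, 8, model_budget))
```

Under these assumed numbers the same network fits the budget as 8-bit weights but not as 32-bit weights, which is why storage format becomes a first-order design decision on this class of hardware.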

The discipline of TinyML forces an engineering rigor that cloud AI rarely demands: you cannot simply pay AWS to brute-force your way past poor architectural decisions. You must optimize.

From 32-bit PyTorch to INT8 TFLite Micro

The typical deployment pipeline for a TinyML project begins exactly where a cloud project does: with a floating-point (FP32) model trained in PyTorch or TensorFlow on high-performance GPUs. However, deploying 32-bit floats to a microcontroller is computational suicide. Floating-point math on edge chips without dedicated FPUs (Floating Point Units) is painfully slow and drains batteries rapidly.

The solution is Post-Training Quantization (PTQ). This process converts the 32-bit floating-point weights and activations of the neural network to 8-bit integers (INT8), which cuts the storage needed for the weights to roughly a quarter. Crucially, when done correctly with a representative calibration dataset, this drastic compression usually costs only a small, often imperceptible, amount of accuracy.
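
To make the idea concrete, here is a minimal sketch of per-tensor affine quantization in plain Python. It is not the algorithm of any particular converter: production tools derive activation ranges from calibration data and differ in details such as per-channel scales. The function names and the sample weights are made up for illustration.

```python
# Minimal per-tensor affine INT8 quantization sketch (illustrative only).

def quant_params(values, qmin=-128, qmax=127):
    """Pick scale and zero-point so the data range maps onto [qmin, qmax]."""
    lo = min(min(values), 0.0)  # include 0.0 so that zero is exactly representable
    hi = max(max(values), 0.0)
    scale = (hi - lo) / (qmax - qmin) or 1.0  # fall back to 1.0 for all-zero input
    zero_point = round(qmin - lo / scale)
    return scale, zero_point


def quantize(values, scale, zero_point, qmin=-128, qmax=127):
    """Map floats to integers, clamping to the INT8 range."""
    return [max(qmin, min(qmax, round(v / scale) + zero_point)) for v in values]


def dequantize(q, scale, zero_point):
    """Recover approximate floats from the integer codes."""
    return [(x - zero_point) * scale for x in q]


weights = [-0.8, -0.1, 0.0, 0.35, 1.2]  # hypothetical FP32 weights
scale, zero_point = quant_params(weights)
q = quantize(weights, scale, zero_point)
restored = dequantize(q, scale, zero_point)

max_error = max(abs(a - b) for a, b in zip(weights, restored))
print("codes:", q)
print("max round-trip error:", max_error, "scale:", scale)
```

Each weight now occupies one byte instead of four, and the round-trip error stays within about one quantization step, which is the intuition behind the small accuracy loss described above.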

The resulting quantized TensorFlow Lite (.tflite) model file is then compiled into a literal C++ byte array using tools like xxd. This array is embedded directly into the microcontroller's firmware, where the TensorFlow Lite for Microcontrollers (TFLM) engine interprets the byte array and executes the graph. No file systems. No dynamic memory allocation. Just raw, deterministic execution.
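
The conversion step is simple enough to sketch by hand. The Python function below imitates the kind of output `xxd -i` produces; the array name `g_model`, the `const` qualifier, and the stand-in bytes are assumptions chosen for illustration, not something the toolchain requires.

```python
# Sketch: render raw model bytes as a C/C++ array, similar in spirit to `xxd -i`.

def to_c_array(data: bytes, name: str = "g_model", per_line: int = 12) -> str:
    """Return C source text defining `name` as a byte array plus a length constant."""
    lines = []
    for i in range(0, len(data), per_line):
        chunk = data[i:i + per_line]
        lines.append("  " + ", ".join(f"0x{b:02x}" for b in chunk) + ",")
    body = "\n".join(lines)
    # `const` is an assumption here; it lets many toolchains place the array in flash.
    return (
        f"const unsigned char {name}[] = {{\n{body}\n}};\n"
        f"const unsigned int {name}_len = {len(data)};\n"
    )


# Stand-in bytes; a real project would use open(path, "rb").read() on the .tflite file.
fake_model = bytes(range(20))
print(to_c_array(fake_model))
```

The generated text can be saved as a source file and compiled into the firmware image alongside the rest of the application.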

Advanced Hardware and Micro-Accelerators

The hardware ecosystem supporting TinyML is evolving at breakneck speed. Historically, edge inference ran on general-purpose ARM Cortex-M CPUs. Today, we are seeing the rise of low-power microNPU (Neural Processing Unit) accelerators baked directly into edge silicon.

These dedicated hardware blocks allow a network that formerly took hundreds of milliseconds to execute on a Cortex-M4 to execute in 20-30 milliseconds on the microNPU, drawing a fraction of the power. This allows for always-on ML—such as continuous wake-word detection or continuous seismic ambient noise monitoring—operating entirely on a coin-cell battery for years.

The Implications for Scientific Monitoring Networks

Distributed TinyML fundamentally restructures what is possible in large-scale scientific sensor networks. Historically, deploying 1,000 seismic nodes meant establishing immense cellular data contracts to stream raw 100Hz waveforms back to a central server for processing.

With TinyML, each node pre-qualifies data locally. The sensor constantly runs an INT8 CNN or FP16 distilled model to detect P-wave and S-wave arrivals. Instead of streaming continuous gigabytes of noise, the sensor sends a single small MQTT message of well under 100 bytes reading: {"event": "P-Arrival", "confidence": 0.94, "timestamp": 1710777123}.
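
To gauge how small such a message is, the sketch below serializes the example event and measures it in three forms. The binary layout and the `EVENT_CODES` table are hypothetical, shown only to illustrate how far the payload could shrink if both ends agreed on a fixed format.

```python
import json
import struct

# The example event from the text.
event = {"event": "P-Arrival", "confidence": 0.94, "timestamp": 1710777123}

# JSON with Python's default separators (", " and ": ").
as_json = json.dumps(event).encode("utf-8")

# The same JSON with optional whitespace removed.
compact_json = json.dumps(event, separators=(",", ":")).encode("utf-8")

# Hypothetical binary layout (an assumption, not a standard):
#   uint8 event code, uint8 confidence in percent, uint32 Unix timestamp, little-endian.
EVENT_CODES = {"P-Arrival": 1, "S-Arrival": 2}
packed = struct.pack(
    "<BBI",
    EVENT_CODES[event["event"]],
    round(event["confidence"] * 100),
    event["timestamp"],
)

print("json:", len(as_json), "compact json:", len(compact_json), "packed:", len(packed))
```

JSON remains easier to debug and extend, so the readable form is a reasonable default unless every transmitted byte matters.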

The systemic advantages are shocking. Cellular bandwidth requirements collapse to less than 1% of the original. This astonishing reduction occurs because the sensor no longer transmits a continuous 24/7 firehose of raw 100Hz background noise. Instead, the TinyML model acts as an intelligent gatekeeper, silently discarding hours of empty signals locally. The power-hungry radio is only briefly woken up to transmit a tiny, predefined text payload during the rare moments when an actual event is confidently detected. Consequently, battery life extends from weeks to years. Most importantly, the physical network becomes totally resilient to connectivity gaps — a critical property for remote, off-grid research or post-disaster deployments where infrastructure is severely compromised.
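
A back-of-the-envelope calculation shows why the reduction is so large. Only the 100 Hz sample rate comes from the text; the channel count, sample width, daily event count, payload size, and per-message protocol overhead below are assumed values for illustration.

```python
# Daily uplink volume: continuous raw streaming versus event-only reporting.
# Every parameter except the 100 Hz sample rate is an illustrative assumption.

SECONDS_PER_DAY = 24 * 60 * 60


def raw_bytes_per_day(sample_rate_hz: int, channels: int, bytes_per_sample: int) -> int:
    """Bytes per day when every sample is streamed to the server."""
    return sample_rate_hz * channels * bytes_per_sample * SECONDS_PER_DAY


def event_bytes_per_day(events_per_day: int, payload_bytes: int, overhead_bytes: int) -> int:
    """Bytes per day when only detected events are reported."""
    return events_per_day * (payload_bytes + overhead_bytes)


raw = raw_bytes_per_day(sample_rate_hz=100, channels=3, bytes_per_sample=4)
events = event_bytes_per_day(events_per_day=50, payload_bytes=70, overhead_bytes=100)
ratio = events / raw

print(f"raw: {raw} B/day, events: {events} B/day, ratio: {ratio:.4%}")
```

With these assumptions the event-only scheme uses a tiny fraction of the raw stream, comfortably below the 1% figure, and the conclusion holds even if the assumed event rate is off by a couple of orders of magnitude.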

The tradeoff is, of course, very real: edge models are inevitably simpler and less expressive than their massive cloud counterparts. But as aggressive compression techniques like Knowledge Distillation, structured pruning, and hardware-aware quantization with curated calibration improve, the fidelity gap between the data center and the microcontroller is narrowing faster than expected.
