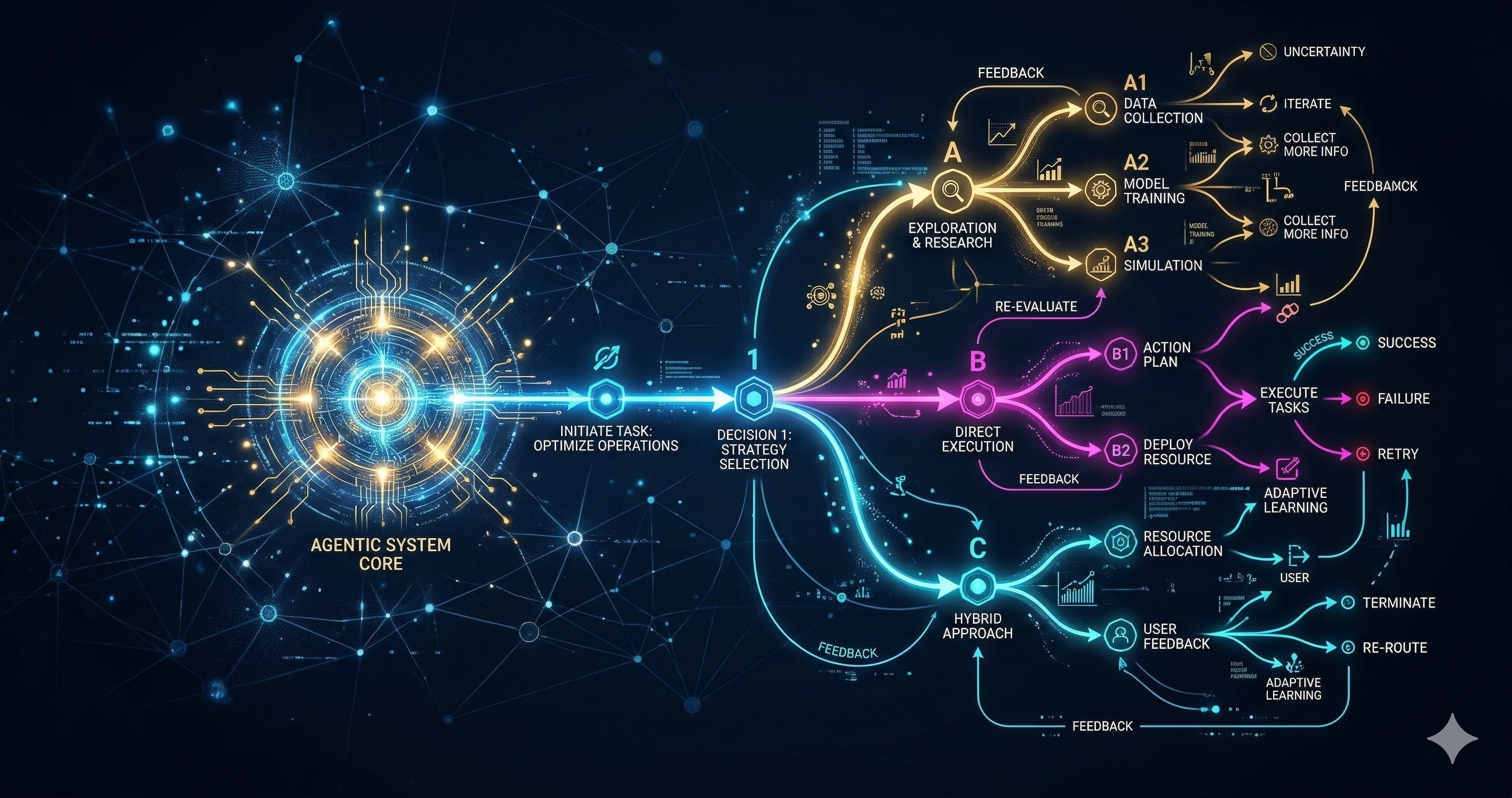

For the past several years, mainstream progress in artificial intelligence was measured almost entirely by improvements in text prediction and generation. A foundation model's utility was defined by its ability to answer a prompt, summarize a document, or generate a block of code within a static chat interface. Agentic AI expands that paradigm by combining multi-step reasoning, autonomous tool use, and feedback from the environment into a continuous, closed loop.

In practice, this means an AI model is no longer just a passive oracle waiting to answer a question. It is an active participant that can autonomously decompose high-level goals ("Review the recent literature on Oklahoma seismicity and draft a summary"), execute sequential tasks, recover from failure states, and adapt its approach when real-world outcomes differ from its internal expectations. This shift is more than a product feature update; it changes how we design, deploy, and govern intelligent systems across industries.

The ReAct Pattern in Production

The backbone of almost every modern autonomous agent is the Reason + Act (ReAct) loop. A practical agent repeatedly cycles through three phases: Reason (analyzing the current state and formulating a plan), Act (invoking a specific tool or API), and Observe (reading the output of that tool).
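To make the cycle concrete, here is a minimal sketch of a Reason, Act, Observe loop. It is an illustration, not a reference implementation: `scripted_policy` stands in for the model's reasoning step, and the `TOOLS` registry, the `"finish"` convention, and the `max_steps` budget are all assumptions made for this example.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical tool registry; the single "add" tool is illustrative only.
TOOLS: Dict[str, Callable[[str], str]] = {
    "add": lambda arg: str(sum(int(x) for x in arg.split("+"))),
}

# One history entry per completed step: (thought, tool name, observation).
Step = Tuple[str, str, str]


def scripted_policy(goal: str, history: List[Step]) -> Tuple[str, str, str]:
    """Stand-in for the model. Returns (thought, tool name, tool input)."""
    if not history:
        return ("I need to compute the sum.", "add", goal)
    # Use the most recent observation as the final answer.
    return ("I have the result.", "finish", history[-1][2])


def react_loop(goal: str, policy, tools, max_steps: int = 5) -> str:
    history: List[Step] = []
    for _ in range(max_steps):
        thought, tool, tool_input = policy(goal, history)  # Reason
        if tool == "finish":
            return tool_input
        observation = tools[tool](tool_input)              # Act
        history.append((thought, tool, observation))       # Observe
    # A hard step budget keeps a confused agent from looping forever.
    raise RuntimeError("step budget exhausted before the agent finished")


answer = react_loop("2+3", scripted_policy, TOOLS)
```

In a real agent the policy would be a model call and the observation would be fed back into the prompt; the control flow, including the step budget, stays roughly the same.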

This tight feedback loop is what enables self-correction and task persistence. However, it also introduces compounding failure risk when an intermediate assumption is wrong. A single miscalibrated reasoning step early in the chain, such as misinterpreting the output of a SQL query, can send the agent down a path that requires significant backtracking or, worse, lead it to complete the task confidently but incorrectly.

What distinguishes production-ready agents from impressive-but-fragile research demos is robustness to this kind of drift. The most reliable implementations treat each intermediate step as a strict checkpoint: verify the state, validate the output against a known invariant where possible, and proceed to the next step only if the result passes a lightweight, deterministic consistency test.
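One way to picture a checkpoint is as a wrapper that refuses to pass a step's output along until a deterministic validator accepts it. The sketch below assumes a hypothetical query step that should return a non-empty list of rows with `id` and `magnitude` fields; the names `checkpointed`, `rows_look_sane`, and `CheckpointError` are invented for this example.

```python
from typing import Any, Callable


class CheckpointError(RuntimeError):
    """Raised when a step's output never passes its validator."""


def checkpointed(
    action: Callable[[], Any],
    validate: Callable[[Any], bool],
    max_attempts: int = 3,
) -> Any:
    """Run `action` until `validate` accepts its output, up to `max_attempts` times."""
    for _ in range(max_attempts):
        result = action()
        if validate(result):
            return result
    raise CheckpointError(f"output failed validation after {max_attempts} attempts")


def rows_look_sane(rows: Any) -> bool:
    """Deterministic check: a non-empty list of dicts with the expected keys."""
    return (
        isinstance(rows, list)
        and len(rows) > 0
        and all(
            isinstance(r, dict) and {"id", "magnitude"}.issubset(r) for r in rows
        )
    )


# Simulated step outputs: two malformed results, then a valid one.
outputs = iter([[], [{"id": 1}], [{"id": 1, "magnitude": 3.2}]])
rows = checkpointed(lambda: next(outputs), rows_look_sane)
```

The validator is deliberately cheap and free of model calls, so a failure here is a hard signal rather than another opinion to weigh.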

An agent's quality is measured less by its ability to generate a single brilliant answer and more by the resilience and soundness of its iterative decisions under uncertainty.

Tool Use as an Interface Problem

Giving a large language model autonomous access to computing tools (live web search, terminal execution, file system reads and writes, and external SaaS APIs) is not simply a matter of wiring up JSON function calls. The model must know when to invoke a tool, what inputs the schema requires, and, just as importantly, how to interpret structured outputs (like a very large JSON array or a core dump) that bear little resemblance to natural-language prose.

This is fundamentally an interface design problem. Tool schemas that are written too permissively lead to speculative API calls that burn compute and rate limits. Schemas that are too narrow lead the model to work around the constraints in creative but undesirable ways (e.g., trying to write a Python script to bypass a restricted API). The most reliable and safest agentic setups are those where each tool has a tightly scoped, clearly described contract and the model's confidence threshold for invoking it is explicitly calibrated and tested.
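As a rough illustration of a tightly scoped contract, the sketch below validates the arguments of a hypothetical search tool before anything is executed. The tool, its fields (`query`, `max_results`), and the specific limits are assumptions chosen for this example, not any particular framework's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SearchArgs:
    """Argument contract for a hypothetical literature-search tool."""

    query: str
    max_results: int = 5

    def __post_init__(self) -> None:
        if not isinstance(self.query, str) or not 3 <= len(self.query.strip()) <= 200:
            raise ValueError("query must be a string of 3 to 200 characters")
        # bool is a subclass of int in Python, so it is excluded explicitly.
        if (
            isinstance(self.max_results, bool)
            or not isinstance(self.max_results, int)
            or not 1 <= self.max_results <= 10
        ):
            raise ValueError("max_results must be an integer from 1 to 10")


def parse_tool_call(raw: dict) -> SearchArgs:
    """Reject unknown or missing arguments instead of guessing what was meant."""
    unknown = set(raw) - {"query", "max_results"}
    if unknown:
        raise ValueError(f"unexpected arguments: {sorted(unknown)}")
    if "query" not in raw:
        raise ValueError("missing required argument: query")
    return SearchArgs(**raw)


args = parse_tool_call({"query": "Oklahoma seismicity"})
```

Rejecting unknown arguments outright, rather than silently dropping them, gives the model a clear error message it can observe and correct on the next step.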

Python code interpreters are the most potent example. When an agent can execute arbitrary Python code in a secure sandbox, it gains genuine analytical capability. It can run complex pandas computations, train basic scikit-learn models, validate results, and iterate on visualizations. But it also introduces a new and dangerous failure mode: code that executes successfully (returning exit code 0) but silently implements the wrong logic, leaving no visible stack trace or error to trigger a retry.
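A small example shows why a clean exit proves little. Below, `buggy_mean` is a made-up stand-in for agent-generated code: it runs without error but divides by the wrong count. The `passes_mean_properties` check is one possible guard, assuming the agent (or its harness) knows a few properties any correct mean must satisfy.

```python
import math
from typing import Callable, List


def buggy_mean(values: List[float]) -> float:
    # Runs cleanly, but divides by the wrong count (illustrative bug).
    return sum(values) / (len(values) - 1)


def correct_mean(values: List[float]) -> float:
    return sum(values) / len(values)


def passes_mean_properties(fn: Callable[[List[float]], float]) -> bool:
    """Check properties every arithmetic mean must satisfy on a few fixed inputs."""
    cases = [[2.0, 2.0, 2.0], [1.0, 2.0, 3.0, 4.0], [10.0, -10.0]]
    for xs in cases:
        m = fn(xs)
        # Property 1: the mean lies between the smallest and largest value.
        if not (min(xs) - 1e-9 <= m <= max(xs) + 1e-9):
            return False
        # Property 2: the mean of identical values is that value.
        if len(set(xs)) == 1 and not math.isclose(m, xs[0]):
            return False
    return True


buggy_ok = passes_mean_properties(buggy_mean)
correct_ok = passes_mean_properties(correct_mean)
```

Neither function raises an exception, so an error-driven retry loop would accept both; only the property check separates them.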

From Solitary Agents to Multi-Agent Coordination

A natural extension of single-agent systems is a network of autonomous agents with specialized roles. One agent handles literature retrieval, another synthesizes the data, a third acts as an adversarial critic attempting to find flaws in the logic, and a final agent drafts the overall report. This mirrors how productive human research teams work, and it provides a principled architectural way to scale reasoning beyond what a single model's context window can hold.

In practice, however, multi-agent systems introduce a new class of failure: coordination breakdown. When one agent's flawed output becomes a downstream agent's unquestioned input, errors propagate and amplify across the network. Trust calibration, meaning an agent knowing how much weight to give a peer agent's output before acting on it, remains an open research problem. Current production approaches rely heavily on structured, hard-coded handoffs and explicit, deterministic verification steps rather than fluid agent-to-agent trust negotiation.
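A structured handoff with a deterministic gate might look something like the sketch below. The `Claim` and `Handoff` types, the rule that every claim must cite a known source, and the agent name are all assumptions for illustration; real systems will have their own schemas and checks.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Tuple


@dataclass(frozen=True)
class Claim:
    text: str
    source_ids: Tuple[str, ...]


@dataclass(frozen=True)
class Handoff:
    """What one agent passes to the next (hypothetical schema)."""

    producer: str
    claims: Tuple[Claim, ...]
    known_sources: FrozenSet[str]


def verify_handoff(handoff: Handoff) -> List[str]:
    """Return a list of problems; an empty list means the handoff is accepted."""
    problems: List[str] = []
    for i, claim in enumerate(handoff.claims):
        if not claim.text.strip():
            problems.append(f"claim {i}: empty text")
        if not claim.source_ids:
            problems.append(f"claim {i}: no supporting source")
        missing = [s for s in claim.source_ids if s not in handoff.known_sources]
        if missing:
            problems.append(f"claim {i}: unknown sources {missing}")
    return problems


handoff = Handoff(
    producer="retrieval-agent",
    claims=(
        Claim("Event rates rose after 2009.", ("paper-1",)),
        Claim("The trend is fully explained.", ()),
    ),
    known_sources=frozenset({"paper-1", "paper-2"}),
)
problems = verify_handoff(handoff)
```

The downstream agent only ever sees handoffs whose problem list is empty; anything else goes back to the producer with the specific failures attached.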

Implications for the Scientific Frontier

In advanced research and scientific workflows, agentic systems hold the promise of accelerating data synthesis, experimental evaluation, and computational reproducibility. A well-designed agent pipeline can autonomously monitor a live literature feed, flag relevant new preprints, generate critical summaries against internal knowledge bases, and link claims to prior experimental work. These are rote, exhausting tasks that currently consume large blocks of researcher time.

The most pressing challenge for the immediate future of agentic AI isn't raw reasoning capability; it's controllability. Building autonomous agents that are capable enough to be genuinely useful, but operationally constrained enough to be trustworthy, is a defining software engineering problem of this decade.
