Reliable AI systems must communicate not only what they predict but also how certain they are in that prediction. In high-stakes scientific and industrial research contexts, calibration errors can be more damaging than obvious mistakes, because obvious mistakes tend to get caught. A confident but wrong answer prematurely closes scientific inquiry, while an honest, quantified uncertainty signal invites human scrutiny and further testing.

Uncertainty-aware reasoning helps engineering and research teams distinguish between well-supported inference, plausible speculation, and unsupported output. That distinction is relatively easy to make in a tightly controlled benchmark or sandbox setting. It is far harder in deployment, where the model routinely faces out-of-distribution queries unlike anything in its training data.

Calibration as a First-Class Metric

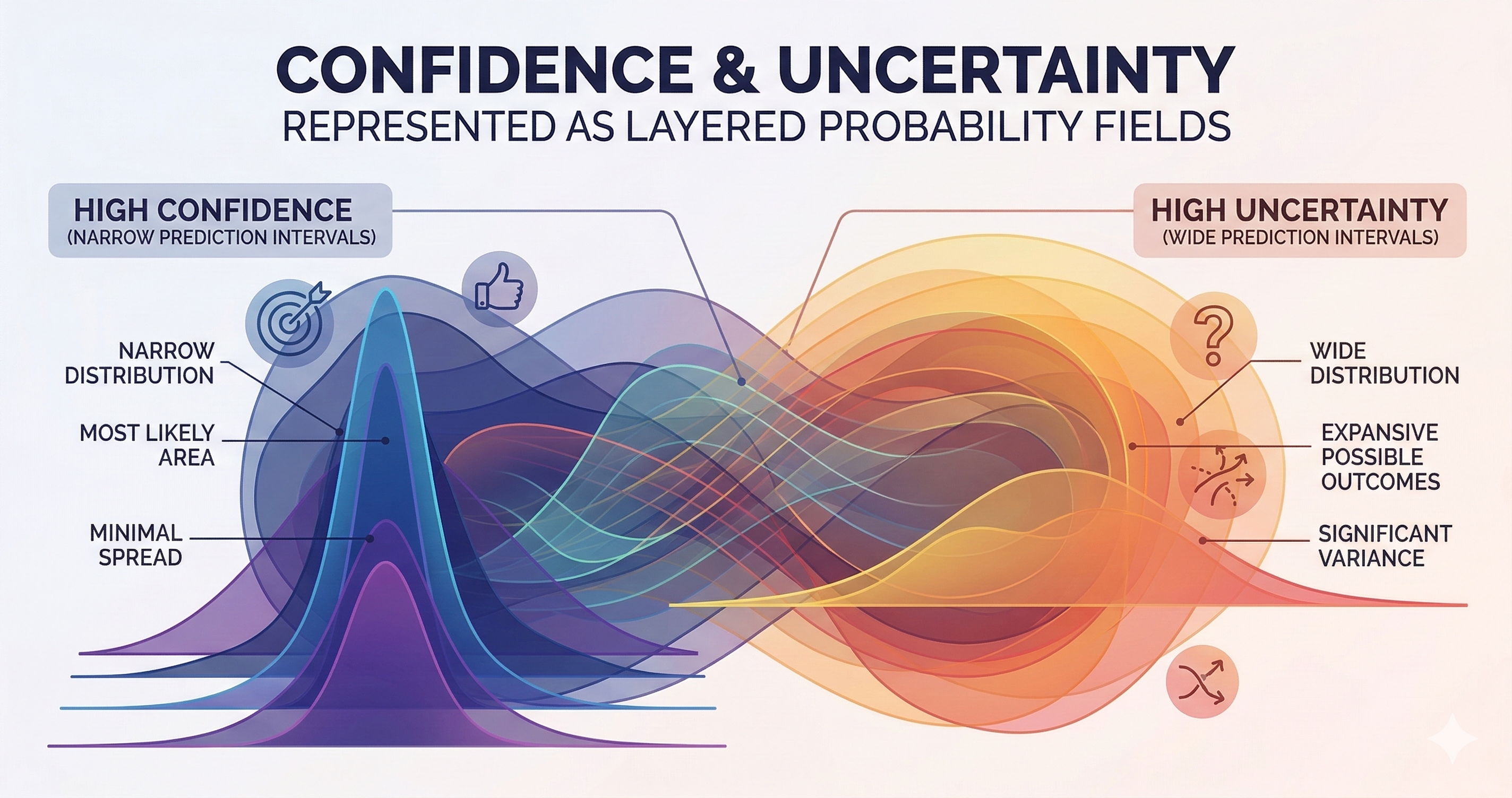

Evaluation should track how well confidence aligns with correctness, not just raw accuracy in isolation. A widely used metric for this is Expected Calibration Error (ECE). ECE groups predictions into confidence bins and measures the gap between the model's average stated confidence and its actual accuracy in each bin, then averages those gaps over a large dataset with each bin weighted by its share of the predictions.
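For reference, the standard binned form of ECE can be written as below. The symbols are notation introduced here rather than taken from the article: n is the number of predictions, M the number of confidence bins, B_m the set of predictions falling in bin m, and acc and conf the average accuracy and average stated confidence within a bin.

```latex
\mathrm{ECE} \;=\; \sum_{m=1}^{M} \frac{|B_m|}{n}\,
  \bigl|\, \mathrm{acc}(B_m) - \mathrm{conf}(B_m) \,\bigr|
```

A perfectly calibrated model has an ECE of 0. The value depends on the choice of M, so scores are only comparable when the binning is the same.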

Simply put: a model that states 80% confidence should be right roughly 80% of the time. If it is right only 40% of the time when claiming 80% confidence, it is badly miscalibrated (in this case, overconfident). Unfortunately, many modern Large Language Models (LLMs) tend to be overconfident, particularly on factual recall tasks.
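The 80% versus 40% example can be checked with a few lines of code. The following is a minimal sketch of binned ECE in plain Python, assuming equal-width bins and a list of per-prediction confidences paired with correctness flags. The function name and the toy inputs are illustrative, not part of any particular evaluation library.

```python
from typing import Sequence


def expected_calibration_error(
    confidences: Sequence[float],
    correct: Sequence[bool],
    n_bins: int = 10,
) -> float:
    """Binned ECE: weighted average of |accuracy - mean confidence| per bin."""
    if len(confidences) != len(correct):
        raise ValueError("confidences and correct must have the same length")
    if not confidences:
        raise ValueError("need at least one prediction")

    # Each bin accumulates [sum of confidences, number correct, count].
    bins = [[0.0, 0, 0] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # Map a confidence in [0, 1] to a bin index; 1.0 goes in the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx][0] += conf
        bins[idx][1] += int(ok)
        bins[idx][2] += 1

    total = len(confidences)
    ece = 0.0
    for conf_sum, n_correct, count in bins:
        if count == 0:
            continue  # empty bins contribute nothing
        gap = abs(n_correct / count - conf_sum / count)
        ece += (count / total) * gap
    return ece


# Toy data: 80% stated confidence, but right only 40% of the time.
overconfident = expected_calibration_error([0.8] * 10, [True] * 4 + [False] * 6)
# Same stated confidence, right 80% of the time.
calibrated = expected_calibration_error([0.8] * 10, [True] * 8 + [False] * 2)
print(f"overconfident ECE: {overconfident:.2f}")
print(f"calibrated ECE:    {calibrated:.2f}")
```

On this toy input the overconfident case scores about 0.40 and the calibrated case about 0.00. Real evaluations need far more than ten predictions per bin for the estimate to be stable.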

An AI model that says "I don't know" or "I cannot determine this from the available context" when appropriate is usually more useful than one that answers every prompt with unwavering confidence. A core challenge is that LLMs are trained largely on internet text, where explicit hedging is often associated with low-quality, unauthoritative writing. Uncertainty markers in human text track the writer's tone and tentativeness, not how likely the statement is to be true. The result is a flawed training signal: a next-token objective (usually cross-entropy loss) rewards reproducing the confident style of the training text, which can inadvertently discourage calibrated, honest hesitation.
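At inference time, the simplest way to act on a confidence score is to abstain below a threshold. The sketch below assumes the system already produces a reasonably calibrated confidence for each candidate answer. The `Answer` type, the `answer_or_abstain` helper, and the 0.75 threshold are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Answer:
    text: str
    confidence: float  # assumed to be a calibrated probability in [0, 1]


ABSTAIN_TEXT = "I cannot determine this from the available context."


def answer_or_abstain(candidate: Answer, threshold: float = 0.75) -> str:
    """Return the candidate answer only if its confidence clears the threshold."""
    if not 0.0 <= candidate.confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    if candidate.confidence >= threshold:
        return candidate.text
    return ABSTAIN_TEXT


# Hypothetical candidates; the texts and confidence values are made up.
print(answer_or_abstain(Answer("The station is on the northern fault segment.", 0.92)))
print(answer_or_abstain(Answer("The station is on the southern fault segment.", 0.41)))
```

The threshold is only meaningful if the confidence is calibrated. With an overconfident model, almost every answer clears the bar and the abstention path is rarely taken.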

Hallucination Is a Calibration Failure

"Hallucination" — the spontaneous generation of highly plausible but entirely false claims — is best understood not as an isolated output bug, but as a critical calibration failure. The model assigns an overwhelmingly high mathematical likelihood to an incorrect token completion because it has perfectly learned the statistical texture, the syntax, and the cadence of confident, fluent academic prose, entirely independent of factual grounding.

This issue is particularly acute in specialized, knowledge-intensive domains like biology or geology. An LLM asked about an obscure seismic swarm or a very recent regional-network research paper will often confabulate plausible-sounding details (inventing magnitudes, fabricating DOIs, or hallucinating author affiliations) because the prompt resembles the confident-sounding text it was trained on.

Hallucinations live in the gap between linguistic confidence and statistical calibration. The fix is not simply throwing "more data" at the model. It is training the model with a better signal about when to express or escalate uncertainty rather than interpolating from surface-level syntax patterns.

Architectural Approaches Worth Knowing

Several active research directions in the AI safety and alignment community have made meaningful progress on this calibration problem:

- Chain-of-Thought (CoT) Prompting: CoT can improve calibration on some tasks by prompting the model to surface its intermediate reasoning steps. With the reasoning steps in the context window, errors in logic become more visible to the model itself (allowing some self-correction) and to the human observer.

- Retrieval-Augmented Generation (RAG): RAG grounds generation in retrieved external source documents. This allows the model's uncertainty to be expressed in terms of source confidence ("The retrieved USGS document does not specify...") rather than opaque parametric recall confidence.

- Verbalized Uncertainty: This involves fine-tuning models, including with RLHF, to output phrases like "I am not certain, but..." or "This is my best statistical estimate based on...". This has reportedly shown modest but real calibration gains in modern instruction-tuned models like Claude and GPT-4.

- Conformal Prediction: This offers a rigorous statistical framework for generating prediction sets with guaranteed coverage. Instead of a single point prediction, a conformal predictor outputs a set of possible answers (e.g., an interval of magnitudes) that contains the true answer at a user-chosen rate (e.g., 95% of the time). The guarantee holds on average over many predictions and assumes the calibration data is exchangeable with the data seen in deployment. This makes it well suited to classification and regression tasks where strict uncertainty bounds are an operational necessity.
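Of the approaches above, conformal prediction is the easiest to show in a few lines. The following is a minimal sketch of split conformal prediction for classification, assuming a classifier that already outputs per-label probabilities and a held-out calibration set. The function names, the event labels, and the probabilities are hypothetical and chosen only for illustration.

```python
import math
from typing import Dict, List, Sequence


def conformal_threshold(
    cal_probs: Sequence[Dict[str, float]],
    cal_labels: Sequence[str],
    alpha: float = 0.1,
) -> float:
    """Calibrate a nonconformity threshold on held-out data (split conformal)."""
    # Nonconformity score: 1 - probability assigned to the true label.
    scores = sorted(1.0 - p[y] for p, y in zip(cal_probs, cal_labels))
    n = len(scores)
    # Finite-sample corrected quantile rank: ceil((n + 1) * (1 - alpha)).
    rank = math.ceil((n + 1) * (1 - alpha))
    if rank > n:
        # Too few calibration points for this alpha: include every label.
        return float("inf")
    return scores[rank - 1]


def prediction_set(probs: Dict[str, float], threshold: float) -> List[str]:
    """All labels whose nonconformity score is within the threshold."""
    return sorted(label for label, p in probs.items() if 1.0 - p <= threshold)


# Hypothetical calibration data for a three-way event classifier.
cal_probs = [
    {"earthquake": 0.9, "blast": 0.05, "noise": 0.05},
    {"earthquake": 0.6, "blast": 0.3, "noise": 0.1},
    {"earthquake": 0.2, "blast": 0.7, "noise": 0.1},
    {"earthquake": 0.3, "blast": 0.2, "noise": 0.5},
]
cal_labels = ["earthquake", "earthquake", "blast", "noise"]

threshold = conformal_threshold(cal_probs, cal_labels, alpha=0.2)
clear_case = prediction_set({"earthquake": 0.55, "blast": 0.40, "noise": 0.05}, threshold)
ambiguous_case = prediction_set({"earthquake": 0.5, "blast": 0.5, "noise": 0.0}, threshold)
print(threshold, clear_case, ambiguous_case)
```

The size of the returned set is itself the uncertainty signal: a single label means the model is confident, while several labels mean the case is ambiguous. Four calibration points are far too few in practice. They are used here only to keep the example readable.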

Implications for Deep Scientific Work

In science generally, and in seismology workflows in particular, uncertainty signals are the triggers that indicate where human experts must review results, rerun physical experiments, or deploy sensors to collect additional data. A magnitude estimate that arrives with an explicit uncertainty bound (M 3.2 ± 0.8) is far more informative than a bare point estimate (M 3.2). The bound tells the analyst how much attention or skepticism the event deserves.
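One simple way to attach such a bound is to summarize the spread across several independent estimates and route wide-spread events to a human. The sketch below assumes an ensemble of magnitude estimates is available and uses the sample standard deviation as the "±" value. The helper names, the 0.5 review threshold, and the input values are hypothetical.

```python
from statistics import mean, stdev
from typing import Sequence, Tuple


def summarize_magnitude(estimates: Sequence[float]) -> Tuple[float, float]:
    """Return (mean, sample standard deviation) of the magnitude estimates."""
    if len(estimates) < 2:
        raise ValueError("need at least two estimates to compute a spread")
    return mean(estimates), stdev(estimates)


def format_estimate(center: float, spread: float) -> str:
    """Render an estimate in the 'M 3.2 ± 0.8' style used in the text."""
    return f"M {center:.1f} ± {spread:.1f}"


def needs_review(spread: float, max_spread: float = 0.5) -> bool:
    """Flag the event for analyst review when the spread exceeds the limit."""
    return spread > max_spread


# Hypothetical ensemble outputs for two events.
for estimates in ([2.4, 3.2, 4.0], [3.1, 3.2, 3.3]):
    center, spread = summarize_magnitude(estimates)
    flag = "REVIEW" if needs_review(spread) else "ok"
    print(format_estimate(center, spread), flag)
```

Both events have the same central estimate of M 3.2, but only the first is flagged. A standard deviation is a crude bound, and a calibrated interval (for example, one produced by conformal prediction) would carry a stronger guarantee.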

The same principle applies to AI-assisted literature summarization, hypothesis generation, and result interpretation. An AI that returns "Based on the three conflicting papers analyzed, the empirical evidence regarding this fault structure is mixed" is far more useful to a researcher than one that synthesizes a confident, definitive conclusion by flattening out the ambiguity in its sources.

This makes statistical calibration not just a technical metric for the ML engineering team but a foundational design philosophy: we must build AI systems that make the shape, depth, and boundaries of their uncertainty legible to the humans working alongside them.
