A large language model (LLM) trained on a massive, static corpus of internet data will never natively know what was published last month. It cannot access the pre-print paper that contradicts its deeply ingrained confidence, the newly released dataset that would challenge its fundamental assumption, or the follow-up study that overturned the original finding it was trained on.

For casual text generation, creative writing, or drafting boilerplate code, this temporal limitation is manageable. But for rigorous scientific work—where accuracy, provenance, and up-to-date knowledge are paramount—a static knowledge cutoff is entirely disqualifying. Hallucinations are not just an annoyance in science; they destroy trust.

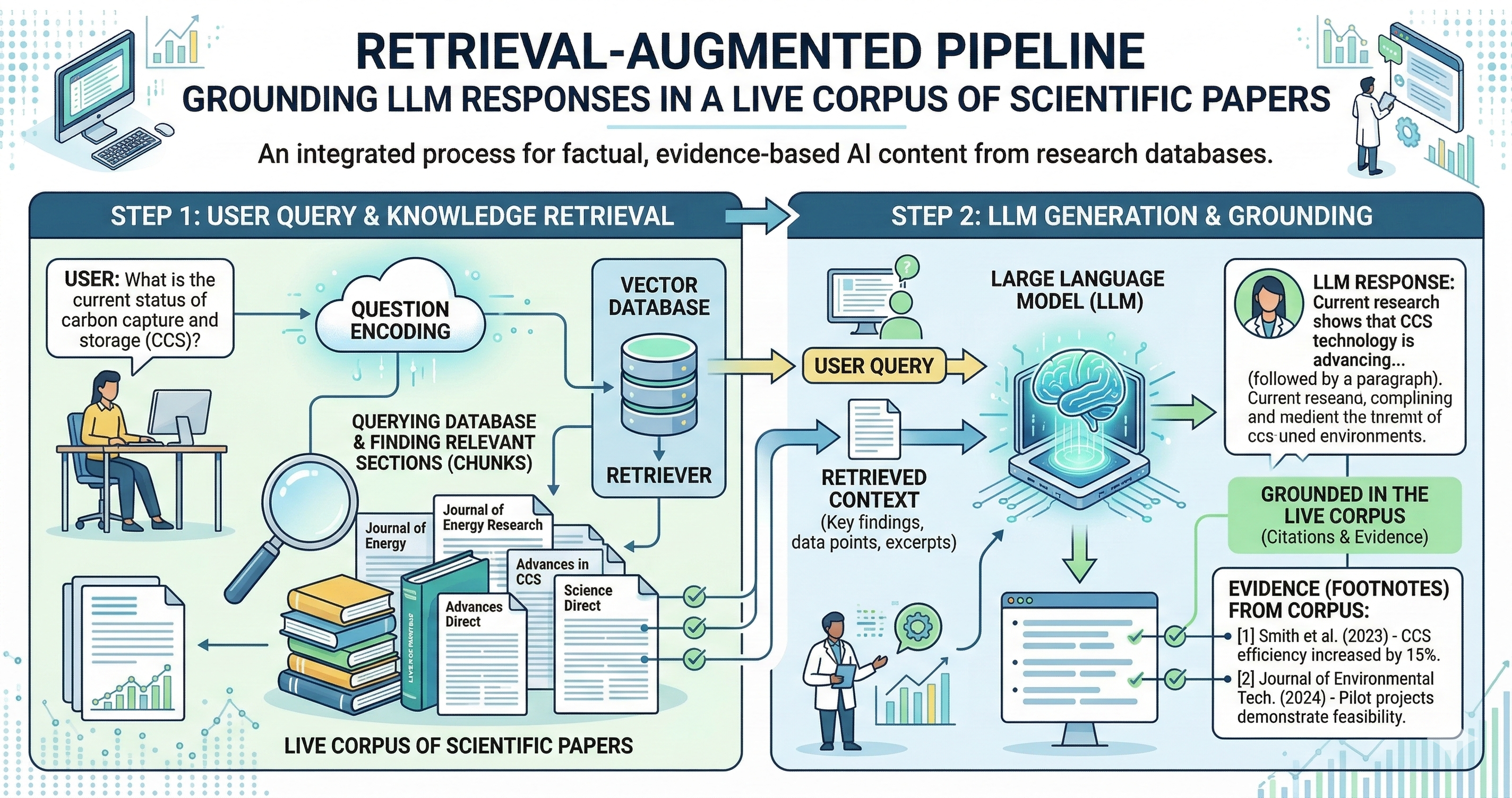

Retrieval-Augmented Generation (RAG) addresses this directly. Instead of asking the model to recall facts purely from its parametric memory (the weights and biases baked into the neural network during pretraining), a RAG system retrieves highly relevant external documents at query time, injects them directly into the LLM's context window, and strictly grounds the generation in that retrieved evidence. Rather than forcing the model to act as a database, RAG allows the model to act as it should: a powerful reasoning and synthesis engine. Every claim it makes becomes traceable to an explicit source.

The Architecture of a Scientific RAG System

A robust RAG pipeline for parsing scientific literature consists of three primary layers.

1. The Indexing Layer: The journey begins offline. Scientific papers (PDFs), preprints (arXiv/EarthArXiv xml), and structured data records are ingested and processed. Because scientific papers are long and LLM context windows are finite, these documents are split into smaller, overlapping "chunks" (e.g., 500 tokens). A specialized embedding model then converts the semantic meaning of each chunk into a highly dimensional dense vector representation. These vectors, along with the original text and crucial metadata (authors, DOIs, publication dates), are stored in a specialized Vector Database (like Pinecone, Milvus, or FAISS).

2. The Retrieval Layer: This occurs dynamically at query time. When a user asks a question, their natural language query is passed through the same embedding model to create a query vector. The vector database performs an Approximate Nearest-Neighbor (ANN) search, rapidly comparing the query vector against millions of document vectors to find the closest semantic matches. The top K most relevant chunks are returned.

3. The Generation Layer: The final step is synthesis. The retrieved chunks are formatted and prepended to a system prompt handed to the LLM. The prompt explicitly instructs the LLM: "Using only the provided context snippets, answer the user's question. If the context does not contain the answer, state that you do not know." The LLM then generates a coherent response heavily conditioned on that retrieved, factual context.

The retrieval step does not just add missing information — it fundamentally changes the epistemic status of the language model's generation. Claims are now strictly conditional on verifiable evidence, not on the model's opaque prior beliefs.

RAG for Seismological Research

In mature, data-heavy physical sciences like seismology, the literature spans decades. Research is scattered across regional network reports, USGS bulletins, historical archives, and dense methodological papers across multiple journals. For a human researcher, comprehensive literature review is a grueling, multi-week undertaking. RAG has profound and immediate application here.

Consider a researcher asking: "What automated phase picking methods have been successfully applied to Oklahoma induced seismicity networks between 2018 and 2024?"

Normally, this query would require hours of keyword searching on Google Scholar, downloading PDFs, and skimming methodologies. A RAG system indexed on a curated seismological corpus can bypass this entirely. It surfaces highly relevant methodology comparisons, author affiliations, and specific dataset references in seconds.

More importantly, the grounded output is verifiable. Rather than an LLM confidently citing a fictional paper (a notorious problem for legacy models), a well-implemented RAG system returns responses with explicit, hyperlinked source attribution. The researcher can instantly follow the citations, check the source page, and critically evaluate the context in which the claim appears. The AI does not replace the researcher's judgment; it vastly accelerates their discovery pipeline.

Advanced RAG: Beyond Simple Vector Search

As RAG architectures mature, simple vector similarity matching is proving insufficient for highly complex scientific domains, where specific keyword matching matters just as much as semantic similarity.

Modern scientific RAG systems employ Hybrid Search, combining dense vector embeddings with sparse keyword algorithms (like BM25). This ensures that querying for a highly specific algorithm name like "STA/LTA" or "PhaseNet" returns exact matches, rather than just conceptually similar phrases. Furthermore, a Re-ranking step is often introduced. A fast, cheap model retrieves the top 100 documents, and a heavier cross-encoder neural network scores and re-ranks those 100 documents based on extreme contextual relevance, passing only the absolute best 5 chunks into the final LLM prompt.

The Limitations RAG Does Not Solve

Despite its power, RAG is not a magical panacea. The quality of the final generation depends entirely on the quality of the underlying index. "Garbage in, garbage out" applies rigorously. Poorly chunked documents that cut sentences in half, low-quality embedding models that fail to capture domain-specific jargon, or simply insufficient corpus coverage will all radically degrade downstream generation.

Retrieval also introduces a new, insidious failure mode: the model may faithfully and articulately ground its response in a retrieved passage that is itself factually incorrect, methodologically flawed, or severely out of date. The LLM generally assumes the retrieved context is empirical truth.

For agentic scientific workflows — where advanced AI architectures autonomously query literature to formulate novel hypotheses, design experiments, or evaluate competing models — robust, high-fidelity RAG infrastructure is no longer an optional enhancement. It is a foundational requirement. Getting the ingestion, chunking, and retrieval architecture right is every bit as critical as the choice of language model used at the end of the pipeline.

Be the first to respond.