When developing machine learning models, PyTorch is often the undisputed framework of

choice, thanks primarily to its dynamic computational graph and pythonic, intuitive API.

Modifying a model's forward pass on the fly, debugging tensor shapes row-by-row, and printing

gradients dynamically is an unmatched experience during the research phase. However, when it comes to

production deployment, relying purely on PyTorch's native

.pth format can create an unnecessary—and often massive—bottleneck.

Moving from PyTorch to the Open Neural Network Exchange (ONNX) format isn't just a compatibility step or a deployment formality—it is one of the most effective ways to drastically increase inference speed, often requiring no additional lossy optimizations.

The Cost of Dynamic Graphs

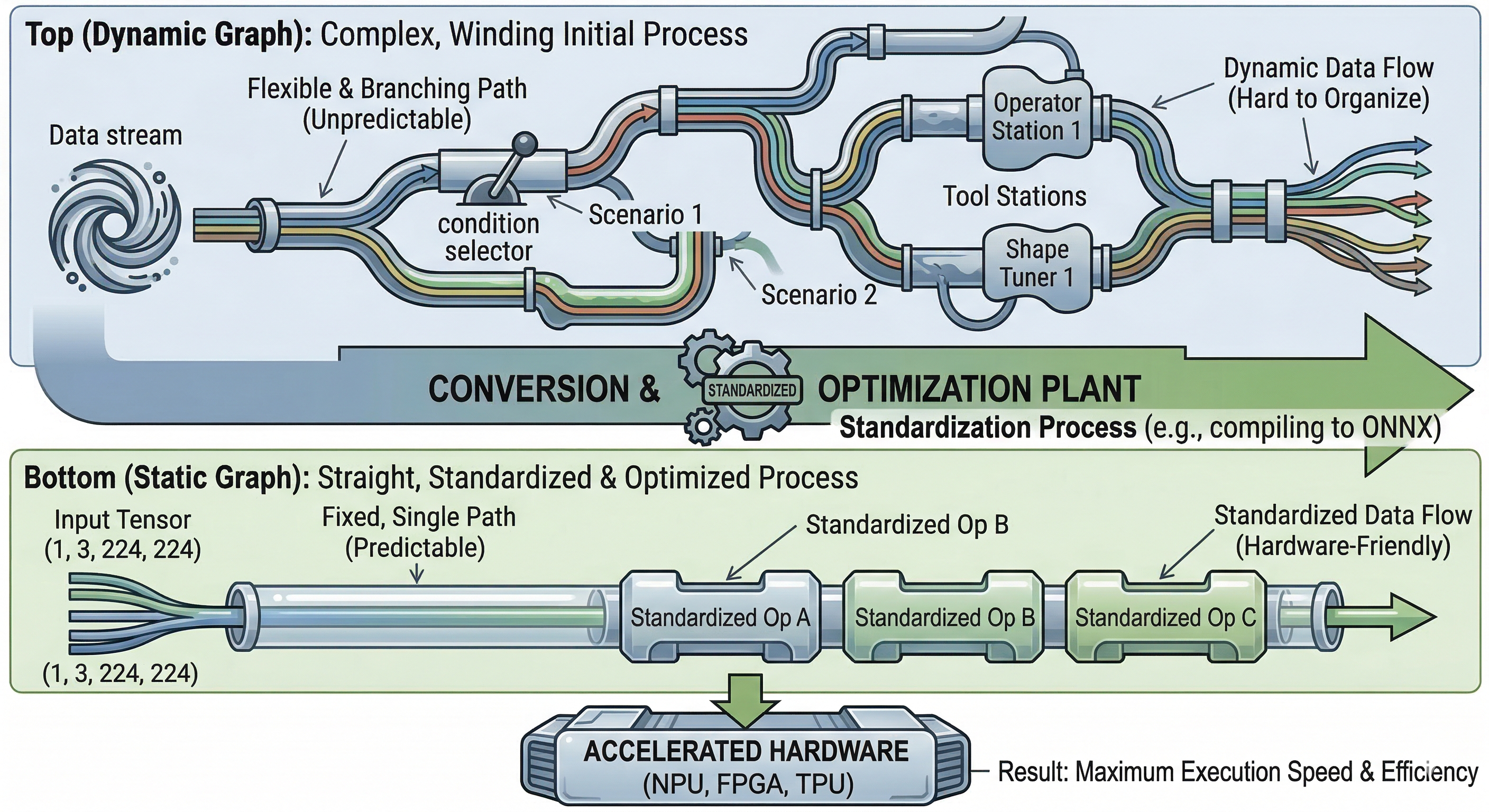

PyTorch's core strength is its eager execution mode, which relies on dynamic graph execution. Each

forward pass builds the computational graph dynamically under the hood, layer by layer. While

recent versions of PyTorch have introduced torch.compile() to bridge the performance

gap and cache these graphs, traditional PyTorch models incur significant Python overhead during every

inference step.

In a production environment where latency and throughput dictate the user experience, spending precious milliseconds interpreting Python code and dynamically formulating operations is a costly tradeoff for flexibility you no longer need. Once a model is trained, its architecture is virtually always completely frozen. Continuing to pay the "flexibility tax" of Python in production means burning compute resources that could otherwise be used to serve more concurrent requests.

Enter ONNX: Static Graphs and Execution Providers

Exporting a PyTorch model to ONNX fundamentally changes how the model is executed. ONNX represents the model as a static, directed acyclic graph (DAG). Because the entire graph structure is known beforehand, the ONNX Runtime (ORT) engine can apply extensive, aggressive graph-level optimizations before a single inference is ever run.

During product building, simply swapping your PyTorch inference pipeline to use ONNX Runtime can yield a drastic speedup—often 2x to 10x faster—even before exploring advanced techniques like INT8 quantization or layer pruning.

These optimizations include constant folding (pre-computing portions of the graph that rely entirely on static values) and node fusion. Node fusion is arguably the most impactful: if your network has a Convolution followed by a Batch Normalization and a ReLU activation, PyTorch might dispatch three separate kernels to the GPU. Each dispatch incurs overhead. ONNX Runtime fuses these into a single highly optimized kernel, massively reducing memory bandwidth pressure and latency.

Furthermore, ONNX Runtime is written entirely in C++ and connects directly to hardware-specific execution providers like CUDA, TensorRT, ROCm, or OpenVINO, entirely bypassing Python's Global Interpreter Lock (GIL). This allows you to serve models seamlessly in environments where Python might not even exist, such as edge devices, mobile platforms, or high-performance C++ backend servers.

Implementation Context: torch.compile() vs ONNX

With the release of PyTorch 2.0, torch.compile() has become the default recommendation for native PyTorch speedups. It utilizes TorchDynamo to capture graphs and TorchInductor to compile them into optimized Triton kernels.

While torch.compile() is brilliant for training and represents a massive leap for the ecosystem, ONNX still holds the crown for purely decoupled inference architectures. ONNX Runtime is highly portable. A .onnx file can be handed off to a completely different engineering team writing an inference server in Go, Rust, or C#. torch.compile, conversely, still deeply ties you to the Python/PyTorch lifecycle and environment.

Limitations and Edge Cases

Despite its advantages, transitioning to ONNX is not without friction. It is important to define the edges where ONNX breaks down:

- Dynamic Shapes: If your model relies heavily on highly dynamic input shapes (e.g., variable sequence lengths in NLP without padding), tracing the model into a static ONNX graph can sometimes fail or require complex dynamic-axis configurations that mitigate some of the speedup.

- Custom ATen Operators: If you write custom C++/CUDA operators in PyTorch, they will not natively translate to ONNX. You must write a custom symbolic function to map the operator to ONNX semantics.

- Control Flow: Data-dependent loops and if-statements inside the model's

forwardpass can be notoriously difficult to export reliably, as the trace often captures only the path taken by the dummy input during the export process.

Seamless Integration into Your Product

For the vast majority of standard architectures (ResNets, Transformers, YOLO variants), exporting a model in PyTorch is remarkably straightforward using

torch.onnx.export(). You provide the model and a dummy input tensor, and PyTorch traces the execution path.

Once exported, loading the model with

onnxruntime is simple and creates a clear separation of concerns

in your tech stack. Research scientists can continue experimenting in PyTorch, utilizing its incredible debugging ecosystem and eager execution. Meanwhile, the platform engineering team can deploy the frozen, highly optimized ONNX graph to production

environments, writing high-throughput inference servers using languages optimized for concurrency.

Even without taking the plunge into deep optimizations like quantization, distillation, or pruning, switching to ONNX provides an "out-of-the-box" performance injection. This makes it a crucial low-hanging fruit for any team looking to scale their AI product efficiently, reducing cloud costs and drastically lowering latency for end users.

Be the first to respond.