When neural-network pioneers Geoffrey Hinton, Oriol Vinyals, and Jeff Dean formally introduced the concept of Knowledge Distillation in 2015, their core insight was as elegant as it was deceptively simple: a rigorously trained neural network knows fundamentally more than its final, hardened predictions actually reveal.

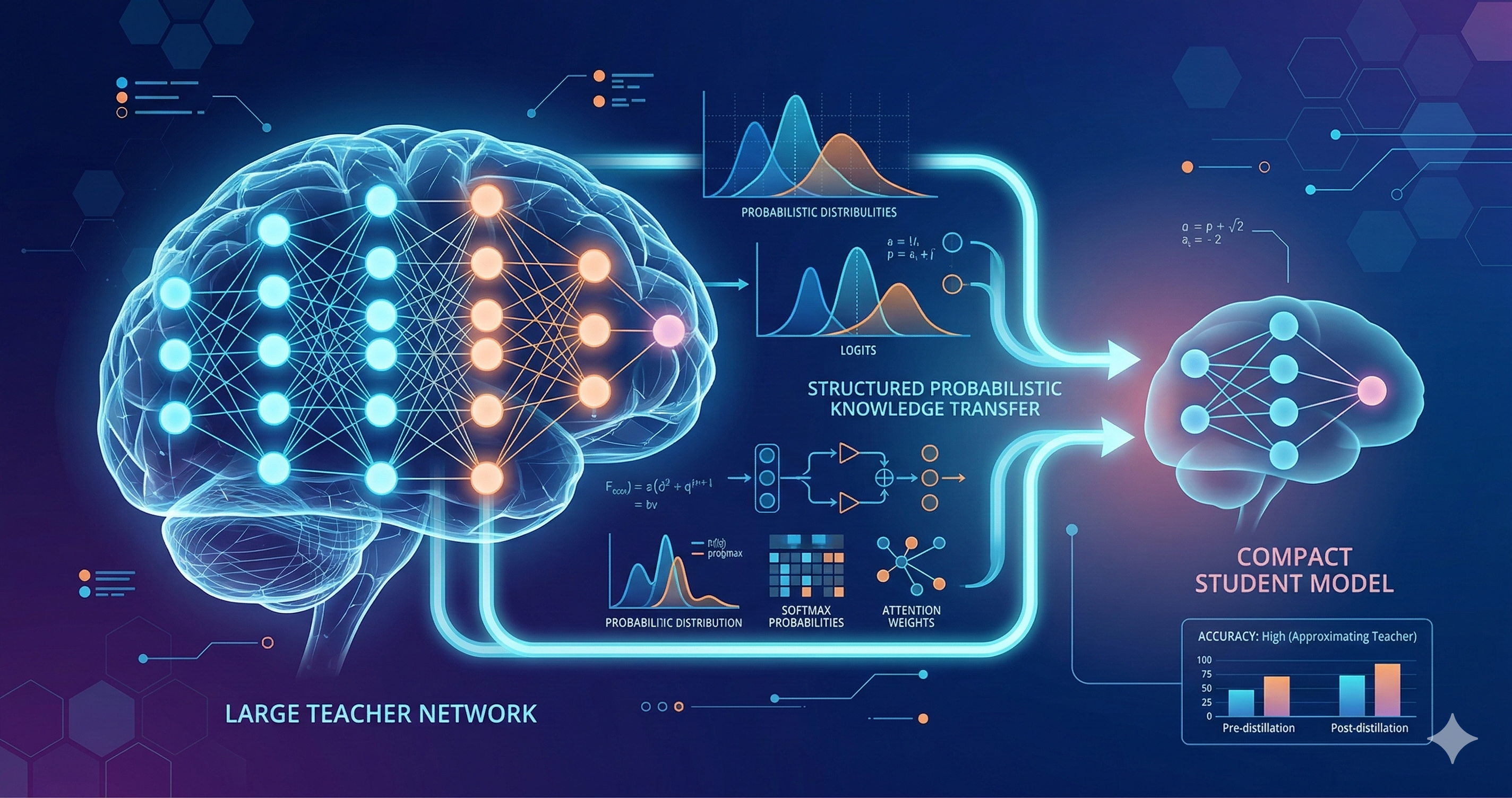

In a standard classification task, a model outputs a probability distribution over all possible classes. We usually only care about the class with the highest probability (the winning prediction). However, the remaining probability distribution across all the "losing" classes encodes profound structural relationships about the dataset—relationships that can be mathematically transferred to supervise the training of a vastly smaller, faster model.

"Dark Knowledge" and the Geometry of Errors

These non-winning probabilities, often referred to as "soft labels," carry what Hinton famously called "dark knowledge."

Consider an image classification model looking at an image of a BMW. The model might assign a 0.7 probability to Car, a 0.2 probability to Truck, and a 0.0001 probability to Carrot. A standard "hard" one-hot label simply says "This is 100% a Car and 0% everything else."

But the soft distribution is communicating something infinitely richer: the neural network has geometrically learned that a Car is visually much more similar to a Truck than it is to a Carrot. By forcing a small "Student" model to mimic this exact probability distribution (rather than just the hard binary label), the Student learns the intricate similarity structure of the entire input space, mapping relationships it could never discover on its own given its limited internal capacity.

The Mechanics of Distillation: Temperature Scaling

Training a student model under distillation combines two distinct loss objectives. The first is the standard cross-entropy loss against the actual ground-truth labels. The second — which is weighted by a hyperparameter — is the Kullback-Leibler (KL) divergence between the student's soft outputs and the teacher's soft outputs at an artificially elevated "Temperature".

Temperature scaling softens both probability distributions before comparing them. It artificially inflates the signal in the low-probability tails (making that 0.0001 probability for a Carrot slightly larger), giving the student model richer, non-zero gradient information about the underlying structure of the problem.

In practical implementations, the temperature parameter T is

typically swept between 2 and 10. Higher values transfer more aggressive

relational structure (smoothing out the distribution significantly); lower

values keep the Student closer to the Teacher's definitive, hard decisions.

Finding the right balance is entirely task-specific and requires empirical

tuning.

Distillation in Scientific Machine Learning

In geophysical applications — specifically where I focus on deploying P-wave and S-wave phase detection models to remote edge sensors — classical Knowledge Distillation offers a highly practical path to bringing AI into the physical field.

A massive, computationally expensive Teacher network (like the heavily parameterized EQTransformer or PhaseNet variants), trained on hundreds of gigabytes of complete historical Oklahoma datasets, can expertly supervise a highly compact, few-layer Convolutional Neural Network (the Student, such as XiaoNet) with simplified architecture. This Student model is intentionally designed to fit entirely within the 2MB/4MB/8MB PSRAM constraint of an ESP32 microcontroller.

Because it is trained via distillation, the tiny Student inherits the massive Teacher's calibration and its nuanced understanding of borderline, ambiguous cases — exactly the noisy, low-SNR events that matter most for real-time seismic monitoring. In rigorous lab tests with these distilled variants, we frequently observed accuracy preservation easily above 90% of the massive Teacher’s performance, all while operating at a tiny fraction of the compute latency and memory footprint.

Distilling the Unseen: Limitations and Caveats

Distillation is powerful, but it cannot fundamentally violate information theory. If the Student model's architecture is too severely constrained, no amount of Teacher supervision will allow it to represent the necessary decision boundaries.

Furthermore, a Student can only learn what the Teacher knows. If the Teacher model is poorly calibrated, biased against microseismicity, or prone to hallucinating arrivals in high noise, the Student will faithfully and flawlessly learn those exact same flaws.

Distillation vs. Quantization

It is a common misconception that Distillation and Quantization (converting 32-bit floats to 8-bit integers) are competing techniques. They are, in fact, entirely complementary.

Distillation compresses the model's architectural capacity (reducing the number of layers and parameters). Quantization compresses the model's numerical representation (reducing the byte-size of the remaining parameters). Used sequentially — distill a massive model into a lean architecture first, and then quantize those lean weights — they produce edge-deployable models that are both structurally elegant and numerically minuscule. Importantly, quantization provides a flexible dial: you do not necessarily need to aggressively compress all the way from FP64 down to INT8. Depending on the specific precision needs and the physical constraints of your target hardware, you can choose to quantize from FP64 to FP32, or down to FP16. This dual approach represents the cutting edge for deploying advanced scientific ML into severely battery- and memory-constrained field environments.

Be the first to respond.