As the Internet of Things (IoT) and widespread sensor technologies continue to mature, we are witnessing an unprecedented deployment of dense, large-scale sensor networks—commonly referred to as large N arrays. From environmental monitoring systems and smart grid infrastructures to high-resolution seismological networks and industrial automation, these arrays consist of thousands or even millions of individual nodes continuously sampling the physical world. The sheer volume and velocity of the data generated by these sprawling deployments hold immense potential for high-fidelity modeling, predictive maintenance, and real-time situational awareness. However, this explosive growth in data generation has exposed a critical vulnerability in traditional centralized data processing architectures.

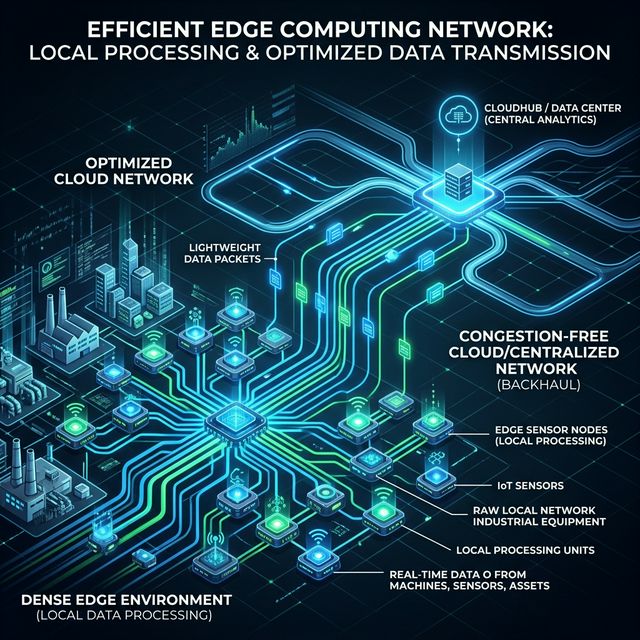

The prevailing paradigm for IoT and sensor network architecture has long relied on a centralized cloud computing model. In this framework, individual sensor nodes are relatively "dumb"; their primary function is to sample data and transmit the raw measurements over a network to a central server or cloud repository. There, powerful data centers aggregate, process, and analyze the incoming streams. While this architecture benefits from the virtually limitless computational power of the cloud, it introduces a massive communications bottleneck when applied to dense large N arrays.

When thousands of sensors simultaneously attempt to stream high-frequency, high-resolution data to a central server, the aggregate bandwidth requirement quickly exceeds the capacity of the available network infrastructure, causing severe congestion. As links approach saturation, the network's ability to route data efficiently degrades rapidly. Packets are delayed or lost entirely, and the retransmissions they trigger add still more traffic to an already overloaded network.
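To put rough numbers on this bottleneck, the sketch below computes the raw aggregate bit rate of a continuously streaming array and compares it with a link's capacity. The array size, sampling rate, sample width and 50 Mbit/s backhaul figure are hypothetical values chosen for illustration. The estimate ignores protocol overhead unless you pass an assumed `overhead_factor`.

```python
def aggregate_bitrate_bps(n_sensors, sample_rate_hz, channels, bits_per_sample,
                          overhead_factor=1.0):
    """Raw aggregate bit rate (bits/s) when every sensor streams continuously.

    overhead_factor > 1.0 crudely approximates framing/protocol overhead (an assumption).
    """
    per_sensor_bps = sample_rate_hz * channels * bits_per_sample * overhead_factor
    return n_sensors * per_sensor_bps


def link_utilization(offered_bps, capacity_bps):
    """Offered load divided by capacity; values above 1.0 mean the link cannot keep up."""
    return offered_bps / capacity_bps


# Hypothetical array: 10,000 three-component sensors sampling at 100 Hz with 24-bit samples,
# all funnelled through a hypothetical 50 Mbit/s backhaul link.
offered = aggregate_bitrate_bps(10_000, 100, 3, 24)
utilization = link_utilization(offered, 50e6)
print(f"offered load: {offered / 1e6:.1f} Mbit/s, utilization: {utilization:.2f}")
```

With these assumed numbers, the offered load is 72 Mbit/s and utilization is 1.44. Any sustained utilization above 1.0 means queues must grow somewhere in the network.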

Consequently, data begins to queue: first at network chokepoints, and inevitably at the sensor nodes themselves. The devices have limited local memory and are forced to buffer outgoing data while they wait for bandwidth to become available. This queuing introduces significant latency, which undermines real-time monitoring and rapid-response applications. If an array is deployed for earthquake early warning or to trigger critical industrial failsafes, a queuing delay of even a few seconds can render the system useless. Persistent congestion also drains the limited batteries of remote sensors, because their radio transmitters must stay active for extended periods while negotiating network access and retransmitting lost packets.
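The queuing dynamics can be illustrated with a simple fluid-queue model of one node's outgoing buffer. This is a minimal sketch, assuming the node generates data at a constant rate, receives a fixed (hypothetical) share of a congested link, and drops whatever overflows its memory. Real radios and MAC layers behave far less smoothly.

```python
def simulate_buffer(arrival_bps, service_bps, buffer_bits, seconds):
    """Fluid-queue model of one node's outgoing buffer, stepped once per second.

    arrival_bps: data the node generates each second.
    service_bps: the share of link capacity the node actually gets under congestion.
    buffer_bits: local memory available for queued data; overflow is dropped.
    Returns (backlog after each second, total bits dropped).
    """
    backlog = 0.0
    dropped = 0.0
    history = []
    for _ in range(seconds):
        backlog += arrival_bps                      # new samples join the queue
        backlog = max(0.0, backlog - service_bps)   # the link drains what it can
        if backlog > buffer_bits:                   # memory is full: the excess is lost
            dropped += backlog - buffer_bits
            backlog = float(buffer_bits)
        history.append(backlog)
    return history, dropped


def queuing_delay_s(backlog_bits, service_bps):
    """Approximate wait for a newly queued bit: the backlog ahead of it over the drain rate."""
    return backlog_bits / service_bps


# Hypothetical node: generates 7,200 bit/s but only gets 5,000 bit/s of a congested link,
# with 64 KiB of buffer memory.
history, dropped = simulate_buffer(7_200, 5_000, 64 * 1024 * 8, seconds=10)
print(f"backlog after 10 s: {history[-1]:.0f} bits, "
      f"delay: {queuing_delay_s(history[-1], 5_000):.1f} s")
```

Under these assumptions, the backlog grows by 2,200 bits every second. After only 10 s, fresh data already waits about 4.4 s, and once the 64 KiB buffer fills, every additional bit is dropped.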

The Edge Computing Paradigm

To resolve the fundamental failure of centralized architectures under heavy data loads, the paradigm must shift from moving data to the computation to moving the computation to the data. This is the core principle of edge computing. Edge computing distributes processing power, storage, and analytical capabilities to the extremities of the network, often onto the sensor nodes themselves or onto nearby edge gateways.

By equipping the nodes within a dense large N array with sufficient local computational power (such as microcontrollers or purpose-built AI accelerators like Google's Edge TPU), the raw data can be ingested and processed immediately at the source. Instead of indiscriminately broadcasting a continuous stream of raw waveform or environmental data, the edge node runs local algorithms, feature-extraction routines, or lightweight neural networks that interpret the signal as it is acquired.
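The text does not name a specific on-node algorithm. As one concrete example of a lightweight local detector, the sketch below implements the classic STA/LTA trigger long used in seismology. It computes the ratio of short-term average signal energy to long-term average signal energy over trailing windows. The window lengths and test signal are hypothetical. Libraries such as ObsPy ship optimized STA/LTA routines, but this pure-Python version needs no dependencies and is small enough for a constrained node.

```python
def sta_lta(signal, n_sta, n_lta):
    """Trailing-window STA/LTA ratio of signal energy (x**2).

    Returns a list the same length as `signal`; entries before the first full
    long-term window (the first n_lta - 1 samples) are 0.0.
    """
    if not 0 < n_sta < n_lta:
        raise ValueError("require 0 < n_sta < n_lta")
    # Prefix sums of energy make every window average an O(1) lookup.
    prefix = [0.0]
    for x in signal:
        prefix.append(prefix[-1] + x * x)
    ratios = [0.0] * len(signal)
    for i in range(n_lta - 1, len(signal)):
        sta = (prefix[i + 1] - prefix[i + 1 - n_sta]) / n_sta
        lta = (prefix[i + 1] - prefix[i + 1 - n_lta]) / n_lta
        ratios[i] = sta / lta if lta > 0 else 0.0
    return ratios


# Hypothetical trace: unit-amplitude background noise followed by a 10x transient.
trace = [1.0, -1.0] * 100 + [10.0, -10.0] * 10
ratios = sta_lta(trace, n_sta=10, n_lta=100)
print(f"peak STA/LTA: {max(ratios):.2f}")
```

Steady background noise keeps the ratio near 1.0, while a sudden transient pushes it well above 1.0. This gives the node a cheap, local basis for deciding whether anything is worth transmitting.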

Light Communication Models for Dense Arrays

Edge computing fundamentally alters the nature of the data transmitted over the network. Once the raw data is processed locally, the node no longer needs to send the full, heavy waveform. Instead, it only transmits the results of its computation: an inferred classification, a summarized statistical parameter, a compressed feature vector, or a critical event trigger. By transmitting only actionable insights or highly compressed metadata, edge computing radically reduces the payload size traversing the network.

This reduction in payload enables light communication models, which are designed to minimize protocol overhead and make efficient use of constrained bandwidth. Protocols built around the publish/subscribe (Pub/Sub) architecture, such as MQTT (Message Queuing Telemetry Transport), are well suited to edge-processed arrays. In a light communication model, sensor nodes maintain a persistent, low-bandwidth connection to a central broker. They publish small, asynchronous event messages only when a significant change or target event is detected locally, and the broker forwards each message to whichever consumers have subscribed to its topic.
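The core of the publish/subscribe pattern is topic routing. The sketch below implements MQTT-style topic-filter matching, where `+` matches exactly one level and `#` matches a level and everything below it. It adds an in-process `TinyBroker` class (hypothetical, with no networking, QoS, sessions or retained messages) to show how a node's event message reaches only the interested consumers. The `array/<station>/<type>` topic scheme is also an assumption. A real deployment would use an actual MQTT broker and client library such as Eclipse Paho.

```python
def topic_matches(topic_filter: str, topic: str) -> bool:
    """MQTT-style topic filter matching ('+' = one level, '#' = this level and below).

    Assumes topic_filter is already valid (e.g. '#' appears only as the last level).
    """
    f_levels = topic_filter.split("/")
    t_levels = topic.split("/")
    # Filters beginning with a wildcard must not match '$'-prefixed system topics.
    if topic.startswith("$") and f_levels[0] in ("+", "#"):
        return False
    for i, level in enumerate(f_levels):
        if level == "#":
            return True  # multi-level wildcard also matches the parent level
        if i >= len(t_levels):
            return False
        if level != "+" and level != t_levels[i]:
            return False
    return len(f_levels) == len(t_levels)


class TinyBroker:
    """Hypothetical in-process stand-in for a broker: no network, QoS, or retained messages."""

    def __init__(self):
        self._subscriptions = []  # list of (topic_filter, callback)

    def subscribe(self, topic_filter, callback):
        self._subscriptions.append((topic_filter, callback))

    def publish(self, topic, payload):
        """Deliver payload to every matching subscriber; return the delivery count."""
        delivered = 0
        for topic_filter, callback in self._subscriptions:
            if topic_matches(topic_filter, topic):
                callback(topic, payload)
                delivered += 1
        return delivered


# Hypothetical topic scheme: array/<station>/<message type>
broker = TinyBroker()
alerts = []
broker.subscribe("array/+/event", lambda t, p: alerts.append((t, p)))
broker.publish("array/sta042/event", b"\x01")   # delivered to the alert consumer
broker.publish("array/sta042/health", b"ok")    # no matching subscriber, nothing delivered
print(alerts)
```

The node never needs to know who consumes its alerts. It publishes to a topic, and the broker fans the message out to subscribers.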

This event-driven telemetry entirely eliminates the continuous streaming of "empty" or baseline data that typically clogs the network. If a seismic sensor on the edge detects normal background noise, it processes this locally and remains silent on the network. Only when the localized model identifies an anomalous transient signal does it wake the radio and transmit a lightweight alert packet containing the event's features.
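The "stay silent until something happens" behaviour can be expressed as a small state machine over a detector's output, for example an STA/LTA ratio stream. This minimal sketch assumes a trigger-on threshold and a lower trigger-off threshold (hysteresis) so that one event produces one alert instead of a burst of them. The threshold values are hypothetical and would need tuning for a real site.

```python
def gate_events(ratios, on_threshold=4.0, off_threshold=1.5):
    """Return the sample indices at which an alert would be transmitted.

    An alert is sent only at trigger onset; the node then stays quiet until the
    detector output falls below off_threshold and a new event can be declared.
    """
    if off_threshold >= on_threshold:
        raise ValueError("off_threshold must be below on_threshold")
    alerts = []
    triggered = False
    for i, r in enumerate(ratios):
        if not triggered and r >= on_threshold:
            triggered = True
            alerts.append(i)   # wake the radio once for this event
        elif triggered and r < off_threshold:
            triggered = False  # event over: return to silent listening
    return alerts


# Hypothetical detector output: background, one sustained event, background, a short event.
ratios = [1.0] * 100 + [5.0] * 10 + [2.0] * 5 + [1.0] * 50 + [6.0] * 3 + [1.0] * 10
sent = gate_events(ratios)
print(f"{len(ratios)} samples processed locally, {len(sent)} alerts transmitted at {sent}")
```

The gap between the two thresholds prevents a signal that hovers around a single threshold from repeatedly waking the radio.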

Solving Congestion and Enabling Scale

The combination of edge computing and light communication models directly addresses the congestion that plagues dense large N arrays. By aggressively filtering and processing data at the source, the overall volume of network traffic can drop by orders of magnitude. The bandwidth previously consumed by raw data streams is freed, so critical event messages can cross the network with minimal queuing delay.
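A back-of-the-envelope comparison shows where the "orders of magnitude" can come from. This sketch assumes the same hypothetical 10,000-sensor array as a raw stream. It compares that stream with an event-driven regime of 10 alerts per sensor per hour at 100 bytes per alert (an assumed size that loosely covers protocol overhead). Keep-alive traffic is ignored, and the result scales directly with the assumed event rate.

```python
import math


def raw_stream_bps(n_sensors, sample_rate_hz, channels, bits_per_sample):
    """Aggregate bit rate when every sensor streams raw samples continuously."""
    return n_sensors * sample_rate_hz * channels * bits_per_sample


def event_stream_bps(n_sensors, events_per_hour, packet_bytes):
    """Aggregate bit rate when sensors send only event packets (keep-alives ignored)."""
    return n_sensors * (events_per_hour / 3600.0) * packet_bytes * 8


# Hypothetical parameters for both regimes.
raw = raw_stream_bps(10_000, 100, 3, 24)
evt = event_stream_bps(10_000, events_per_hour=10, packet_bytes=100)
reduction = raw / evt
print(f"raw: {raw / 1e6:.1f} Mbit/s, event-driven: {evt / 1e3:.1f} kbit/s, "
      f"reduction: {reduction:.0f}x (~{math.log10(reduction):.1f} orders of magnitude)")
```

Under these assumptions, traffic falls from 72 Mbit/s to roughly 22 kbit/s, a factor of about 3,240.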

Consequently, data queuing is virtually eliminated. Because the payloads are small and infrequent, sensor nodes can rapidly dispatch their insights and return their radio transmitters to a low-power sleep state, dramatically extending the operational lifespan of remote battery-operated arrays. The network is no longer a bottleneck; it is returned to its intended function as a rapid, reliable conduit for critical information.
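The battery benefit follows from duty cycling: average current is the time-weighted mix of active and sleep current. This minimal sketch assumes hypothetical figures of a 30 mA active radio, a 10 µA sleep current, a 2,000 mAh battery, and 0.5 s of radio-on time per alert at 10 alerts per hour. It budgets the radio only and ignores the microcontroller, sensor front end, and battery self-discharge.

```python
def average_current_ma(duty_cycle, active_ma, sleep_ma):
    """Time-weighted average current for a radio that is active a fraction of the time."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return duty_cycle * active_ma + (1.0 - duty_cycle) * sleep_ma


def event_duty_cycle(events_per_hour, active_seconds_per_event):
    """Fraction of each hour the radio is on when it wakes only to send alerts."""
    return min(1.0, events_per_hour * active_seconds_per_event / 3600.0)


def battery_life_hours(capacity_mah, avg_ma):
    return capacity_mah / avg_ma


# Hypothetical hardware figures (radio only).
ACTIVE_MA, SLEEP_MA, CAPACITY_MAH = 30.0, 0.01, 2000.0

streaming = battery_life_hours(CAPACITY_MAH, average_current_ma(1.0, ACTIVE_MA, SLEEP_MA))
duty = event_duty_cycle(events_per_hour=10, active_seconds_per_event=0.5)
event_driven = battery_life_hours(CAPACITY_MAH, average_current_ma(duty, ACTIVE_MA, SLEEP_MA))
print(f"always-on radio: {streaming / 24:.1f} days, "
      f"event-driven radio: {event_driven / 8760:.1f} years")
```

With these assumed figures, a radio that never sleeps lasts under three days, while one that wakes only for alerts lasts more than four years. At that point, the sleep current dominates the budget.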

As the scale of sensor deployments continues to escalate into the millions, centralized cloud architectures will become increasingly untenable. For dense large N arrays, the migration toward edge computing is not merely an optimization—it is a mandatory architectural evolution. By processing heavily at the edge and communicating lightly over the network, researchers and engineers can finally decouple array density from network congestion, unlocking the true potential of massive, real-time sensing infrastructures.
