The first wave of Large Language Models (LLMs) fundamentally changed how we write, brainstorm, and code. However, for rigorous physical sciences like seismology, generating plausible-sounding text is not enough. A seismologist does not need an AI to write a poem about an earthquake; they need an AI to download a month of continuous waveform data from an FDSN server, apply a 2–80 Hz bandpass filter, run a neural-network phase picker, associate the detected arrivals into discrete events, and generate a draft catalog with computed magnitude residuals.

This leap, from pure text generation to autonomous execution, is the domain of Agentic AI. It represents a shift from models that merely predict text to models that act on it. Agentic systems orchestrate tools, track intermediate outputs, debug their own runtime errors, and preserve reproducible traces.

What Makes an AI "Agentic"?

At its core, an Agentic AI system consists of a reasoning engine (the LLM) surrounded by a framework of executable tools (APIs, Python interpreters, database clients). When prompted with a high-level scientific objective, the agent does not immediately spit out an answer. Instead, it enters a ReAct (Reason + Act) loop.

It reasons about what it needs to accomplish the goal. It acts by calling the appropriate tool. It observes the tool's output, and then reasons again about the next step.
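The loop can be sketched in a few lines of Python. This is a minimal illustration, not any particular framework's implementation: the tool functions, `scripted_policy`, and `react_loop` are hypothetical names, and the policy is a hard-coded stand-in for the LLM so the example runs without a model.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical tools. Real ones would call an FDSN client or run analysis code.
def fetch_waveforms(target: str) -> str:
    return f"fetched waveforms for {target}"

def compute_ppsd(target: str) -> str:
    return f"computed PPSD for {target}"

TOOLS: Dict[str, Callable[[str], str]] = {
    "fetch_waveforms": fetch_waveforms,
    "compute_ppsd": compute_ppsd,
}

History = List[Tuple[str, str]]  # (tool name, observation) pairs

def scripted_policy(goal: str, history: History) -> Tuple[str, str]:
    """Stand-in for the LLM: pick the next action from what has been observed."""
    used = [name for name, _ in history]
    if "fetch_waveforms" not in used:
        return "fetch_waveforms", goal
    if "compute_ppsd" not in used:
        return "compute_ppsd", goal
    return "finish", f"summary based on {len(history)} observations"

def react_loop(goal, policy, tools, max_steps=10):
    """Reason, act, observe, and repeat until the policy decides to finish."""
    history: History = []
    for _ in range(max_steps):
        action, argument = policy(goal, history)   # reason
        if action == "finish":
            return argument, history
        observation = tools[action](argument)      # act
        history.append((action, observation))      # observe
    raise RuntimeError("step budget exhausted before the agent finished")

answer, trace = react_loop("OK.FNO", scripted_policy, TOOLS)
```

The `max_steps` budget is a design choice worth keeping in any real agent: it bounds the loop if the model never decides it is done. The returned `trace` is the raw material for the provenance records discussed later.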

For example, if you ask an agentic system to "Compare the background noise profile between Station FNO (OK network) and Station SHAW (OK network) for the first week of January 2024," the agent can write the Python code using ObsPy to fetch the data, compute the Probabilistic Power Spectral Densities (PPSDs), plot the results using Matplotlib, save the figure, and return a written summary of any differences visible between the two stations.
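The code an agent might generate for that request could look roughly like the sketch below. It uses ObsPy's FDSN client and `PPSD` class. The channel code (`HHZ`), the location wildcard, the `"IRIS"` provider key, and the output filenames are assumptions for illustration, as are the helper names `week_window`, `station_ppsd`, and `compare_noise`. Data availability for these stations and dates has not been checked here.

```python
from datetime import datetime, timedelta

def week_window(start_iso, days=7):
    """Return (start, end) ISO timestamps for a window of `days` days."""
    start = datetime.fromisoformat(start_iso)
    end = start + timedelta(days=days)
    return start.isoformat(), end.isoformat()

def station_ppsd(network, station, start_iso, end_iso,
                 channel="HHZ", location="*", provider="IRIS"):
    """Fetch waveforms and response metadata, then build a PPSD for one station."""
    # ObsPy is imported here so the helper above stays usable without it.
    from obspy import UTCDateTime
    from obspy.clients.fdsn import Client
    from obspy.signal import PPSD

    client = Client(provider)
    t0, t1 = UTCDateTime(start_iso), UTCDateTime(end_iso)
    stream = client.get_waveforms(network, station, location, channel, t0, t1)
    # level="response" is needed so the PPSD can remove the instrument response.
    inventory = client.get_stations(network=network, station=station,
                                    location=location, channel=channel,
                                    starttime=t0, endtime=t1, level="response")
    ppsd = PPSD(stream[0].stats, metadata=inventory)
    ppsd.add(stream)
    return ppsd

def compare_noise(stations=("FNO", "SHAW"), network="OK"):
    """Save one PPSD figure per station for the first week of January 2024."""
    start, end = week_window("2024-01-01")
    for station in stations:
        ppsd = station_ppsd(network, station, start, end)
        ppsd.plot(filename=f"ppsd_{network}_{station}.png")
```

Calling `compare_noise()` requires network access and an ObsPy installation. A week of continuous high-rate data is a large single request, so a production version would likely fetch it in day-long chunks and add each chunk to the same `PPSD` object.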

From Signals to Scientific Decisions

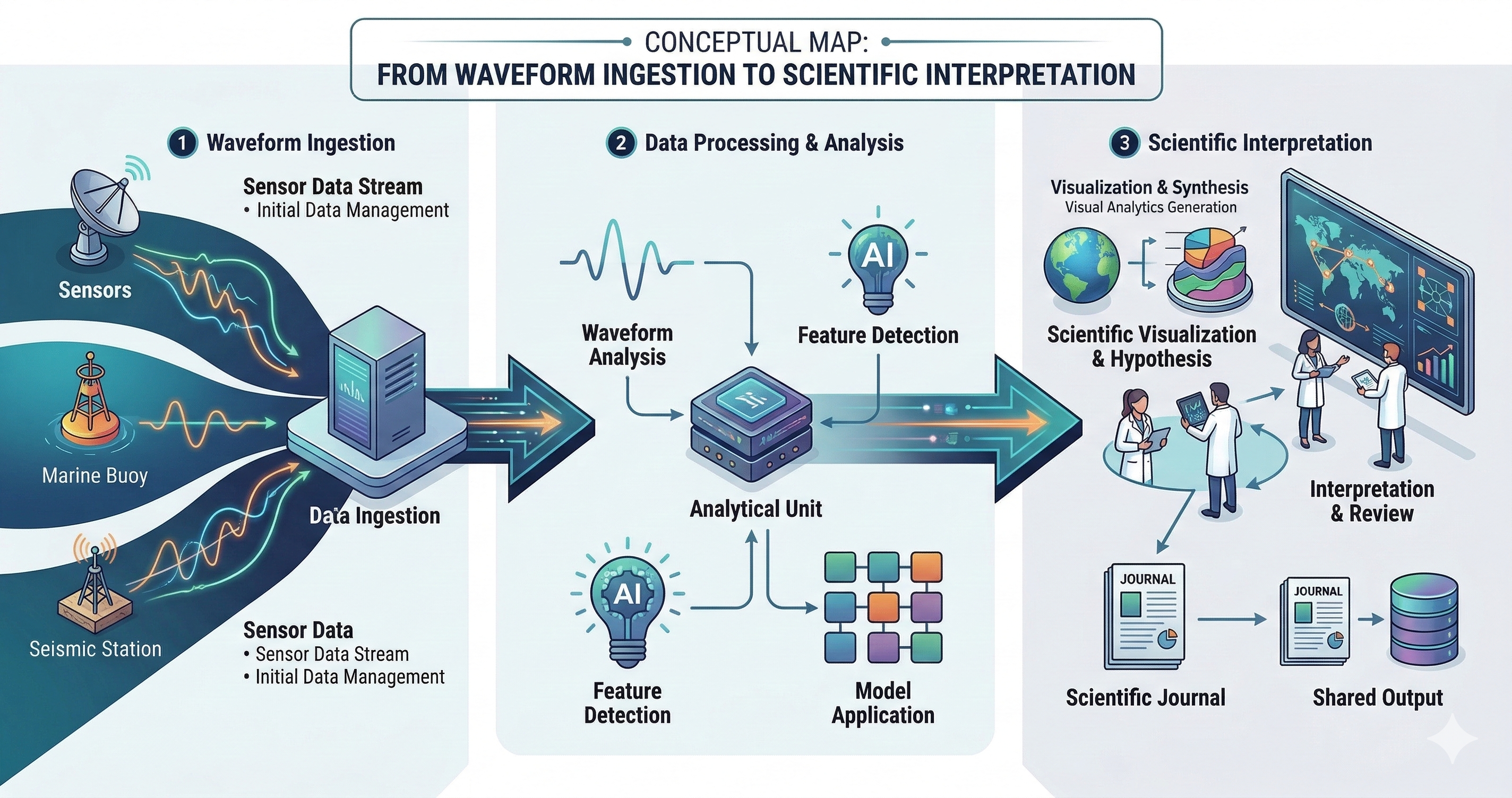

Seismology workflows traditionally combine rote data wrangling, advanced signal processing, cataloging, spatial modeling, and meticulous human interpretation. Agentic AI is well suited to removing the tedious bottlenecks in this pipeline.

Instead of replacing domain experts, well-scoped agents reduce the burden of repetitive work across event triage, large-scale metadata checks, and literature mapping. Consider the workflow of reviewing thousands of microseismic detections generated by an automated deep-learning picker. An agent can be set up to loop through every detection, download the corresponding waveform file, measure the signal-to-noise ratio (SNR), flag events with overlapping coda waves that might confuse the picker, and present a curated "Review Needed" dashboard to the human analyst.

The key value here is structured, predictable assistance. The agent handles ingestion, feature extraction, and quality-control routing in a fixed format, while the human expert reserves their attention for final interpretation and analysis.
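One piece of that triage pass, the SNR check and the routing into a review queue, might be sketched as follows. The detection record layout, the window lengths, and the 10 dB threshold are assumptions chosen for illustration. The coda-overlap check is not implemented here.

```python
import math

def rms(samples):
    """Root-mean-square amplitude of a sequence of samples."""
    return math.sqrt(sum(v * v for v in samples) / len(samples))

def snr_db(trace, pick_index, noise_len, signal_len):
    """SNR in dB: RMS of the window after the pick over RMS of the window before it."""
    if pick_index < noise_len or pick_index + signal_len > len(trace):
        raise ValueError("noise or signal window falls outside the trace")
    noise = trace[pick_index - noise_len:pick_index]
    signal = trace[pick_index:pick_index + signal_len]
    noise_rms = rms(noise)
    if noise_rms == 0:
        return math.inf
    return 20 * math.log10(rms(signal) / noise_rms)

def triage(detections, min_snr_db=10.0):
    """Return the detections whose SNR falls below the (hypothetical) threshold."""
    review_needed = []
    for det in detections:
        value = snr_db(det["trace"], det["pick_index"],
                       det["noise_len"], det["signal_len"])
        if value < min_snr_db:
            review_needed.append({"id": det["id"], "snr_db": round(value, 1)})
    return review_needed

# Synthetic traces: 100 noise samples, then 100 samples of larger amplitude.
strong = {"id": "strong", "trace": [1, -1] * 50 + [10, -10] * 50,
          "pick_index": 100, "noise_len": 100, "signal_len": 100}
weak = {"id": "weak", "trace": [1, -1] * 50 + [2, -2] * 50,
        "pick_index": 100, "noise_len": 100, "signal_len": 100}

queue = triage([strong, weak])
```

Because the output is a plain list of records with a fixed set of keys, it can feed a dashboard or a spreadsheet without the analyst needing to read the agent's free-text reasoning.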

Designing for Trust and Reproducibility

The introduction of autonomous agents into scientific workflows raises legitimate concerns about reproducibility. If an AI writes and executes code to clean a dataset, how can another research team reproduce the exact same cleaning process a year later, especially when the underlying LLM may have been updated or deprecated?

Research-grade agentic systems must be designed with explicit provenance. In scientific contexts, the final answer an agent provides is of little value without the trace of how it got there. Modern agentic frameworks in science are moving toward generating Deterministic Tool Logs. When the agent finishes a task, it outputs a strict, version-controlled JSON ledger of every API call it made, the exact Python code it generated and executed, and the exact dependencies used in the sandbox.

This practice ensures that the agent's work is not a black box. A reviewer can rerun the logged Python script locally, in the recorded environment, and verify the results without involving the LLM at all.
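A ledger of this kind could be as simple as the sketch below. The field names and the functions `ledger_entry`, `package_versions`, and `build_ledger` are hypothetical, since the ledger's schema is not specified here. The example uses only the Python standard library.

```python
import hashlib
import json
import platform
from importlib import metadata

def package_versions(names):
    """Record the installed version of each package, or None if it is absent."""
    versions = {}
    for name in names:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions

def ledger_entry(step, tool, arguments, code):
    """One record per tool call, with a hash so later edits to the code are detectable."""
    return {
        "step": step,
        "tool": tool,
        "arguments": arguments,
        "code": code,
        "code_sha256": hashlib.sha256(code.encode("utf-8")).hexdigest(),
    }

def build_ledger(entries, packages):
    """Serialize the run as JSON with sorted keys so diffs in version control stay stable."""
    record = {
        "python": platform.python_version(),
        "packages": package_versions(packages),
        "steps": entries,
    }
    return json.dumps(record, indent=2, sort_keys=True)

entry = ledger_entry(1, "python", {"station": "FNO"}, "print('hi')")
ledger_json = build_ledger([entry], ["obspy", "numpy"])
```

Storing the executed code verbatim alongside its hash is what lets a reviewer rerun the analysis directly from the ledger. The hash only confirms that the stored code has not been altered since the run.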

The Human in the Semantic Loop

The ultimate goal of Agentic AI in seismology is not zero-human-intervention automation. The stakes in observational geophysics, particularly in hazard mitigation, early warning, and induced seismicity regulation, are far too high for that.

Instead, the goal is Human-in-the-Loop Orchestration. The agent acts as a junior researcher: it drafts the code, runs the analysis, creates the plots, and compiles the literature review. The human seismologist steps in at critical inflection points to review the plan, correct methodological errors, and approve the final interpretive framing. By automating the friction of scientific logistics, Agentic AI frees the seismologist to actually do science.
